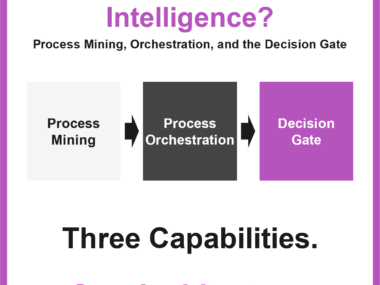

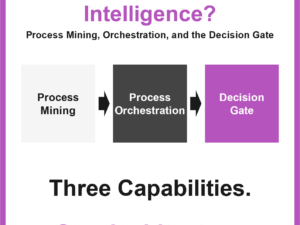

Process Intelligence Explained: Mining, Orchestration, and the Decision Gate

Process intelligence is not a single tool. It combines process mining, process orchestration, and a decision gate into one architecture that shows how processes really run, governs what happens next, and keeps automation and AI inside auditable boundaries.