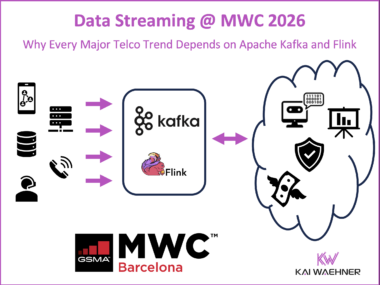

Data Streaming at MWC 2026: How Apache Kafka, Flink and Agentic AI Power Telecom Trends

Mobile World Congress (MWC) 2026 highlights the shift from batch systems to real-time data streaming in telecom. AI and agentic automation, network APIs, sovereign cloud, autonomous networks, and 5G monetization all depend on continuous, governed data flows at scale. A Data Streaming Platform built on Apache Kafka and Apache Flink enables operators to collect, process, and act on live data across network and business systems. It provides the foundation for applied AI, SLA enforcement, API monetization, and usage-based billing. MWC shows that telecom innovation and measurable business value now rely on real-time data streaming.