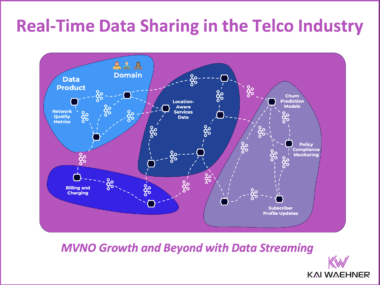

Real-Time Data Sharing in the Telco Industry for MVNO Growth and Beyond with Data Streaming

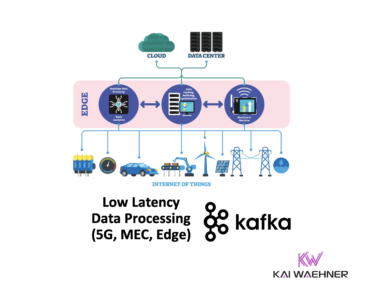

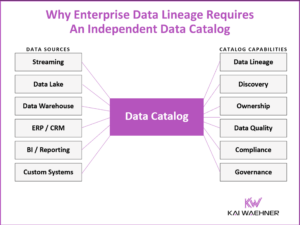

The telecommunications industry is transforming rapidly as Telcos expand partnerships with mobile virtual network operators (MVNOs), IoT platforms, and enterprise customers. Traditional batch-driven architectures can no longer meet the demand for real-time, secure, and flexible data access. This blog post explores how real-time data streaming technologies such as Apache Kafka and Apache Flink, combined with hybrid cloud architectures, enable Telcos to build trusted, scalable data ecosystems. It covers the key components of a modern data sharing platform, critical use cases across the Telco value chain, and how policy-driven governance and tailored data products support new business opportunities, operational excellence, and regulatory compliance. Telcos that master real-time data sharing can turn raw events into a strategic advantage faster and more securely than batch-based approaches allow.