AI agents are only as good as the data they act on. The observation sounds obvious, but its implications are routinely ignored when teams move from AI prototype to production. The prototype works beautifully in a demo because it operates on clean, curated, low-volume sample data. The production system fails to deliver because it is asked to reason over raw, high-volume, noisy event streams with no filtering, no enrichment, and no signal extraction in between.

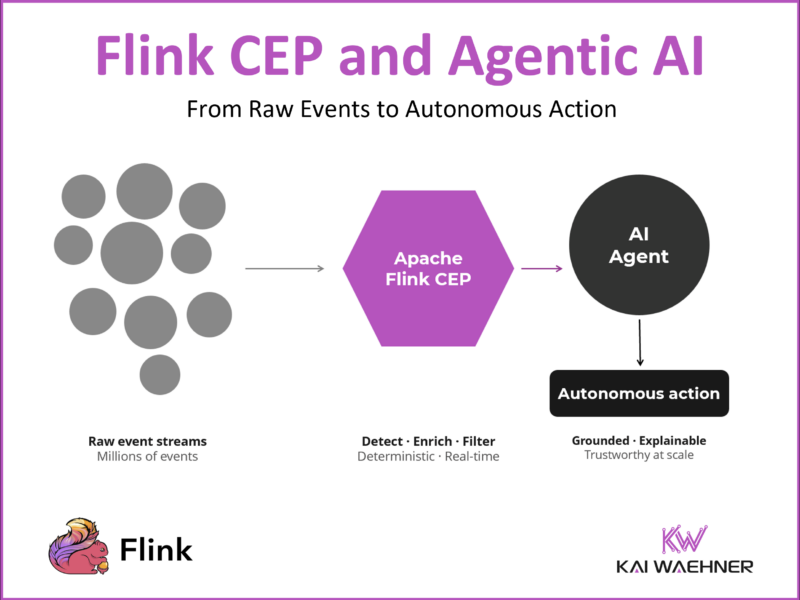

This article is about closing that gap. Specifically, it is about why Complex Event Processing (CEP) with Apache Flink is the missing layer between your data streams and your AI agents, and how combining Flink CEP with the right downstream architecture produces a system that is not just technically sound but trustworthy enough for enterprise production.

Flink CEP is Apache Flink’s built-in Complex Event Processing library that detects predefined event sequences in real-time data streams. If you are not yet familiar with how it works technically, the companion article Complex Event Processing (CEP) with Apache Flink: What It Is and When (Not) to Use It covers the foundations: the Pattern API, MATCH_RECOGNIZE, the missing event problem, the vendor landscape, and best practices. This article builds on that foundation and focuses on the architectural integration with Agentic AI.

The Problem: Raw Streams Overwhelm AI Agents

The default assumption when connecting AI agents to real-time data is straightforward: put a message broker on one side, put the agent on the other, and let the agent react to events. In practice this approach fails at enterprise scale for three compounding reasons.

Volume. A mid-sized e-commerce platform processes hundreds of thousands of transactions per hour. A telco network generates millions of signaling events per minute. An industrial IoT deployment produces billions of sensor readings per day. An AI agent cannot evaluate every single one of those events meaningfully. Pushing raw, high-cardinality streams through an AI reasoning layer produces noise, not intelligence.

Cost. LLM inference is not free. At scale, the cost of running every raw event through a model compounds quickly. Enterprises that have run this experiment have found it unsustainable before they even reach production load. Reducing the volume of events that reach the LLM is not just a technical optimization. It is a financial requirement.

Hallucination and unreliability. LLMs reason well when given specific, grounded, well-scoped inputs. They reason poorly when given ambiguous, high-noise, context-free streams of raw operational data. A language model presented with a stream of transaction events without structured context about what the pattern means, what the business rules are, and what normal state looks like will hallucinate with confidence. The problem is not the model. The problem is the input.

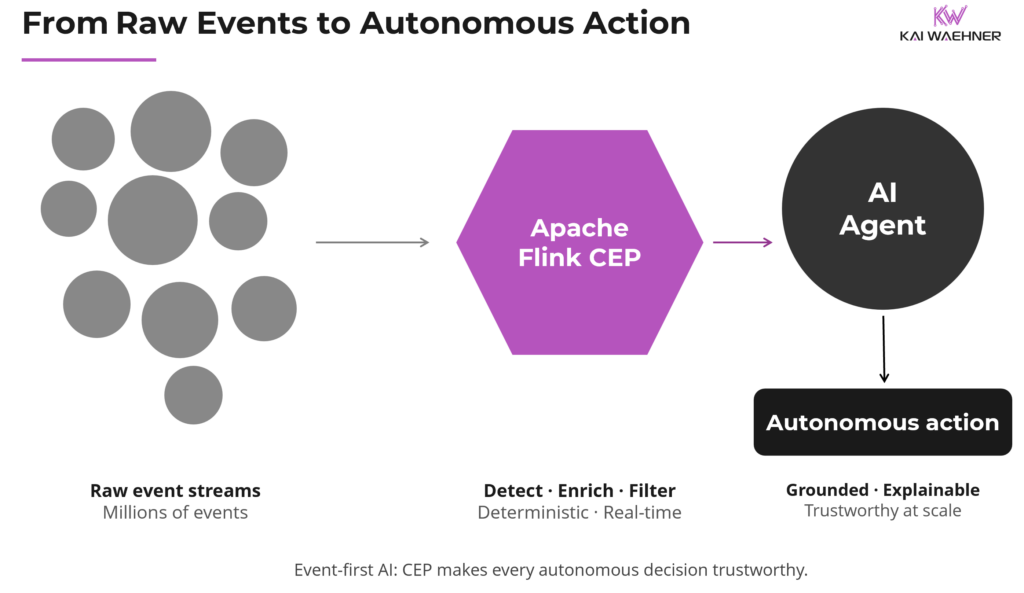

The architectural conclusion is simple: AI agents should not be the first processor of raw event streams. Something needs to happen before the agent sees the data. Flink CEP is that something.

How Flink CEP Filters Event Streams for AI Agents

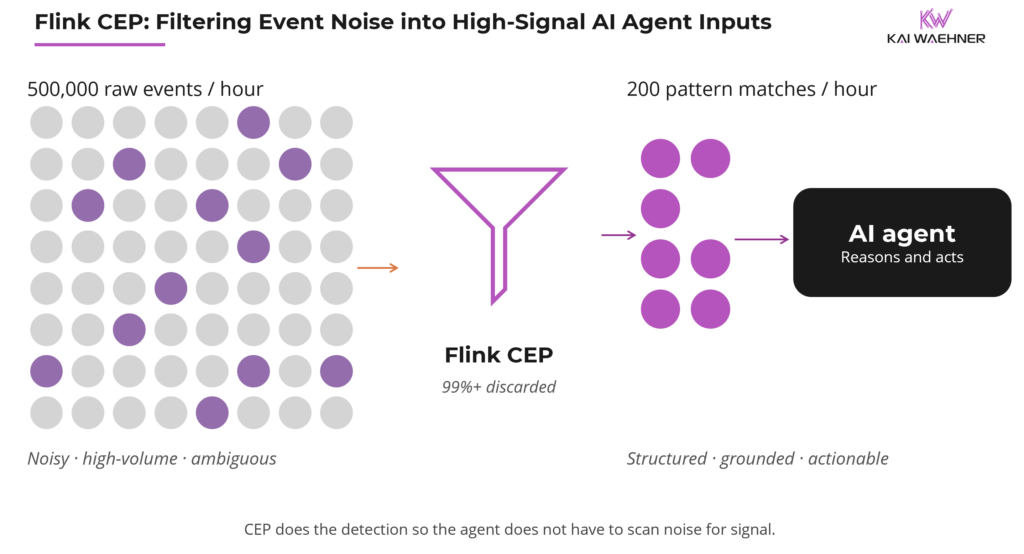

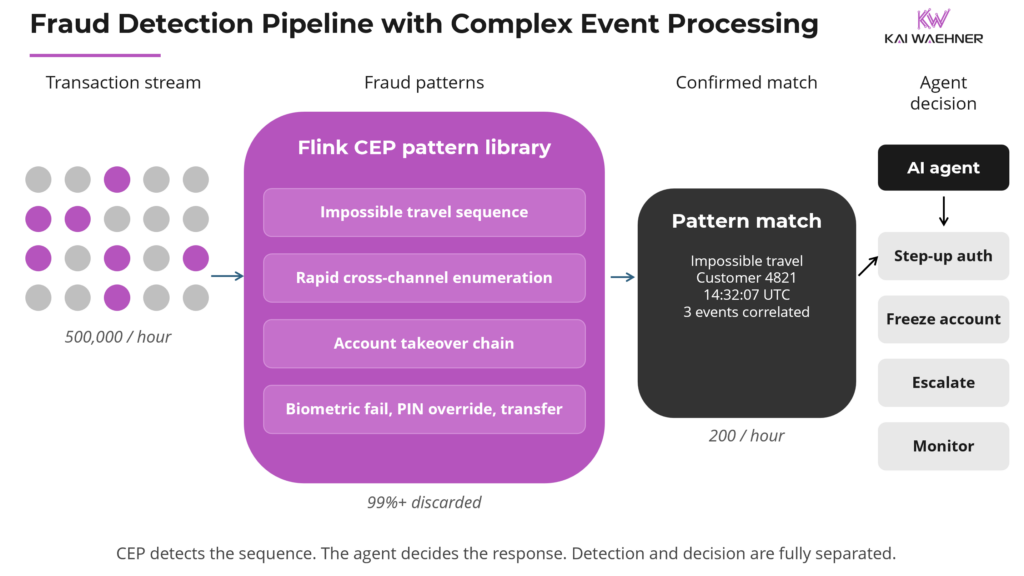

A payment platform processing 500,000 transactions per hour might generate 200 confirmed fraud pattern matches per hour. That is a reduction of roughly 99.96 percent before any LLM is involved. The AI agent receives 200 specific, grounded, actionable inputs per hour instead of half a million ambiguous raw events. The economics of LLM inference look completely different at those two volumes.

This volume-to-signal transformation is the core value of Flink CEP in an Agentic AI architecture. CEP continuously evaluates every incoming event against every active pattern and emits a result only when a complete match is found. Everything else is discarded. The output is not a stream of raw events. It is a stream of structured, semantically rich pattern-match events, each one representing a confirmed detection of something that matters.

Instead of “here is a stream of transactions, figure out which ones are suspicious,” the agent receives “a three-event fraud sequence matching the impossible travel pattern was detected at 14:32:07 for customer 4821, here are the correlated event details.” The agent now has a specific, grounded, actionable input. Its job is to reason about what to do, not to scan noise for a signal that CEP has already found.
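To make the volume-to-signal idea concrete, here is a minimal sketch in plain Python. It is not Flink's Pattern API or MATCH_RECOGNIZE. It only mimics the behavior described above: keep a little state per customer, stay silent on most events, and emit a structured match when a sequence completes. The `Transaction` and `PatternMatch` types, the two-event simplification of the impossible travel pattern, and the one-hour window are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Iterator, Tuple


@dataclass(frozen=True)
class Transaction:
    customer_id: str
    country: str
    timestamp: float  # event time in seconds


@dataclass(frozen=True)
class PatternMatch:
    pattern: str
    customer_id: str
    events: Tuple[Transaction, ...]  # the correlated events that formed the match


def detect_impossible_travel(
    transactions: Iterable[Transaction], window_seconds: float = 3600.0
) -> Iterator[PatternMatch]:
    """Emit a match when one customer transacts in two countries within the window.

    ASSUMPTION: events arrive in timestamp order per customer. A real engine
    also has to deal with out-of-order events, which this sketch ignores.
    """
    last_seen: Dict[str, Transaction] = {}  # per-customer state
    for tx in transactions:
        prev = last_seen.get(tx.customer_id)
        if (
            prev is not None
            and prev.country != tx.country
            and tx.timestamp - prev.timestamp <= window_seconds
        ):
            yield PatternMatch("impossible_travel", tx.customer_id, (prev, tx))
        # Events that complete no pattern produce no output at all.
        last_seen[tx.customer_id] = tx
```

Feed this a stream where only one customer changes country inside the window and a single `PatternMatch` comes out, carrying exactly the two events that triggered it. That small, structured object is what the agent sees, not the raw stream.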

Why Flink CEP Makes Agentic AI Trustworthy and Cost-Effective

Two properties of this approach matter for enterprise trust.

Determinism where it matters. CEP pattern detection is deterministic. Given the same event sequence, the same pattern fires every time. There is no probabilistic variance, no hallucination, no model drift. For regulated industries where audit trails and explainability are required, the fact that the detection step is rule-based and reproducible is a significant compliance advantage. The AI agent adds reasoning and judgment on top of a deterministic detection foundation, rather than being the sole source of both detection and decision.

Cost proportionality. LLM inference is invoked only when a pattern match has already been confirmed. The cost of CEP evaluation is orders of magnitude lower than LLM inference. By filtering the event stream through Flink CEP first, the system spends expensive AI inference only on events already identified as worth investigating. Depending on pattern selectivity, the reduction in events reaching the AI layer can span several orders of magnitude: in the payment example above, 500,000 raw events per hour shrink to 200 pattern matches.
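The arithmetic behind cost proportionality is simple enough to write down. The sketch below uses the event volumes from the payment example in this article. The price per LLM call is a purely hypothetical figure chosen for illustration, and the cost of running the CEP job itself is left out.

```python
def reduction_ratio(raw_events: int, matches: int) -> float:
    """Fraction of raw events that never reach the LLM."""
    if raw_events <= 0:
        raise ValueError("raw_events must be positive")
    return 1.0 - matches / raw_events


def hourly_llm_cost(calls_per_hour: int, cost_per_call: float) -> float:
    """LLM spend per hour, assuming every call costs the same."""
    return calls_per_hour * cost_per_call


RAW_EVENTS_PER_HOUR = 500_000  # from the payment example in this article
MATCHES_PER_HOUR = 200         # from the payment example in this article
COST_PER_CALL_USD = 0.002      # ASSUMPTION: hypothetical price, not a real quote

unfiltered = hourly_llm_cost(RAW_EVENTS_PER_HOUR, COST_PER_CALL_USD)
filtered = hourly_llm_cost(MATCHES_PER_HOUR, COST_PER_CALL_USD)
saved_fraction = reduction_ratio(RAW_EVENTS_PER_HOUR, MATCHES_PER_HOUR)
```

Under that assumed price, sending every raw event to the model costs about 1,000 dollars per hour, while sending only confirmed matches costs about 40 cents. The absolute numbers depend entirely on the assumed price. The ratio does not.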

The Flink CEP and Agentic AI Architecture: Detect, Enrich, Decide

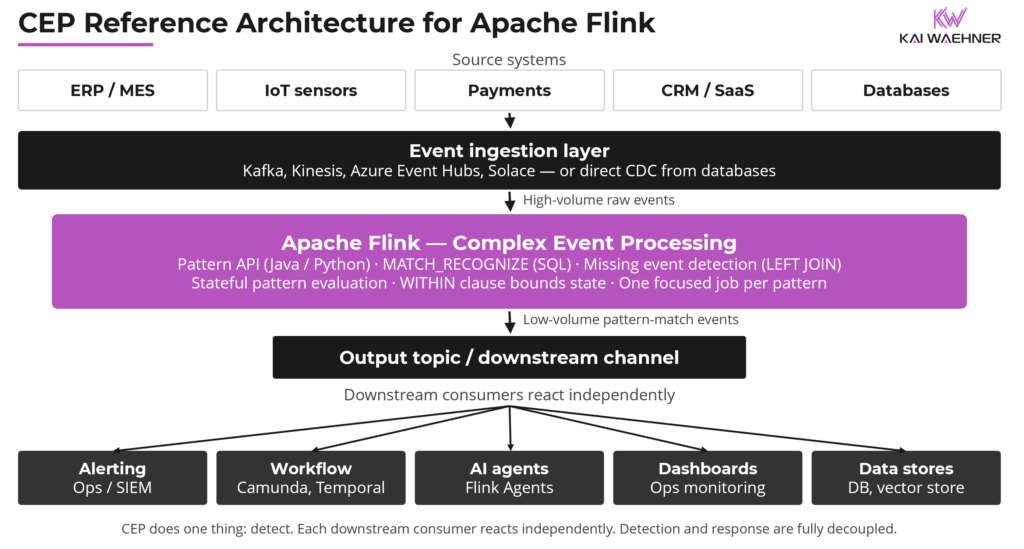

The complete architecture has four layers, each with a clear and non-overlapping responsibility.

Layer 1: Event Ingestion

Before Flink CEP can detect anything, events need to flow from source systems into the processing layer. Apache Kafka is the recommended approach for most enterprise scenarios: it decouples producers from consumers, provides durability and replay, enforces governance through schema validation and lineage tracking, and ensures every downstream system works from the same consistent, ordered event log.

Kafka is not the only option. Other event-driven messaging platforms are valid alternatives depending on existing infrastructure. Solace is strong in financial services and high-performance scenarios. AWS EventBridge and Azure Event Hubs serve cloud-native architectures well. Google Pub/Sub, Redpanda, and Apache Pulsar each have their own positioning and strengths. All of these can feed Apache Flink for CEP processing through Flink’s connector ecosystem. The Data Streaming Landscape 2026 explores the vendors in more detail.

A third path, often overlooked, is direct ingestion into Flink without a message broker at all. Flink’s CDC connectors, including Debezium-based connectors and the native Flink CDC framework, can pull events directly from operational databases: PostgreSQL, MySQL, Oracle, SQL Server, MongoDB, and others. This is particularly relevant when source systems are databases rather than event-producing applications, and when adding a messaging layer is not justified by the use case. The CEP processing is identical regardless of ingestion path.

Layer 2: Flink CEP Pattern Detection

Apache Flink subscribes to the event source, continuously evaluates incoming events against defined patterns using the Pattern API or MATCH_RECOGNIZE, and publishes structured result events to an output destination when a pattern match is confirmed.

The CEP job does one thing: detect. It does not enrich, does not decide, does not call external systems. Its output is a clean, low-volume stream of confirmed pattern detections, each carrying the matched events and their timestamps.

I covered the Flink CEP concepts in more detail here: When (Not) To Use Complex Event Processing with Apache Flink.

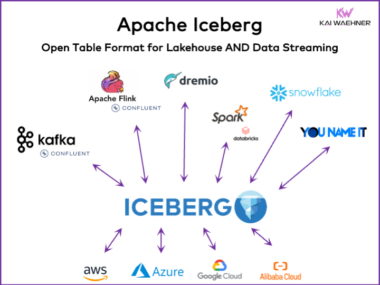

Layer 3: Enrichment and Serving

The CEP output needs somewhere to land and be enriched with business context before it reaches an AI agent. The right sink depends on the use case and the downstream consumer. Common patterns: write CEP output to a relational database for structured storage and synchronous query access; write to a document store like MongoDB or Elasticsearch for flexible, low-latency retrieval; write to a vector store when the downstream agent uses semantic search or retrieval-augmented generation; write to a feature store when the CEP output feeds ML models; or maintain continuously updated materialized views using additional Flink jobs that join CEP output with reference data.
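As a rough illustration of the enrichment step, the sketch below joins a pattern-match event with reference data held in a lookup table. In a real deployment this would be a Flink join or a query against the serving layer. Here a plain dictionary stands in for it, and the field names (`customer_id`, `usual_countries`, `open_disputes`) are hypothetical.

```python
from typing import Any, Dict, Mapping


def enrich_match(
    match: Mapping[str, Any],
    profiles: Mapping[str, Mapping[str, Any]],
) -> Dict[str, Any]:
    """Attach business context to a pattern-match event.

    ASSUMPTION: `profiles` is an in-memory stand-in for whatever store
    (database, materialized view, feature store) holds the reference data.
    """
    profile = profiles.get(match["customer_id"])
    enriched = dict(match)  # copy, so the original match event stays untouched
    enriched["context"] = dict(profile) if profile is not None else None
    # Make a missing profile explicit instead of silently passing it on.
    enriched["context_found"] = profile is not None
    return enriched
```

Flagging a missing profile explicitly is a deliberate choice in this sketch: an agent that knows context is absent can escalate, while an agent that receives an empty record may reason as if nothing were wrong.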

Several vendors offer purpose-built serving layer capabilities designed to bridge streaming data and AI agents. The common architectural principle across all of them is the same: Flink produces the processed, enriched signal; the serving layer makes it accessible to agents in a consumable form; MCP, REST, or a direct query interface provides the agent-facing access layer. As discussed in the MCP vs. REST vs. Kafka article, MCP is a useful standardized interface for agent tool access, but it is not a data governance mechanism. Data consistency and freshness are properties of the streaming and serving layers underneath.

Layer 4: Apache Flink Agents

Flink Agents is one consumer option for CEP output, not a requirement. The architecture works today with any downstream consumer: a Kafka topic, a workflow engine, an alerting system, a simple microservice. Flink Agents adds the ability to move that consumer logic onto the streaming runtime itself. You can start simple and add agents later without changing the detection layer.

Apache Flink Agents, formalized as FLIP-531, enables natively event-driven AI agents built directly on Flink’s streaming runtime. Recent releases introduced exactly-once consistency for agent actions and native MCP tool invocation. Commercial Flink distributions have begun shipping their own streaming agent capabilities on top of this foundation.

These are not chatbots waiting for a user prompt. They are autonomous, always-on agents that react to events. A CEP pattern-match event triggers an agent. The agent receives the pattern details, queries the enrichment layer for business context, reasons about the appropriate response, and executes an action within defined boundaries.
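The trigger, lookup, reason, act loop can be sketched in a few lines of plain Python. This is not the Flink Agents API. The `lookup_context` and `propose_action` callables are hypothetical stand-ins for the enrichment layer and the LLM call, and the action names are illustrative. The part worth noticing is the boundary check: whatever the model proposes, only an allowed action is ever executed.

```python
from typing import Any, Callable, Dict, Mapping

# ASSUMPTION: illustrative action names; a real deployment defines its own.
ALLOWED_ACTIONS = frozenset(
    {"step_up_authentication", "freeze_account", "monitor", "escalate_to_human"}
)
FALLBACK_ACTION = "escalate_to_human"


def handle_pattern_match(
    match: Mapping[str, Any],
    lookup_context: Callable[[str], Mapping[str, Any]],
    propose_action: Callable[[Mapping[str, Any], Mapping[str, Any]], str],
) -> Dict[str, Any]:
    """React to one pattern-match event and return the decision record."""
    # 1. Query the enrichment layer for business context.
    context = lookup_context(match["customer_id"])
    # 2. Let the reasoning step (an LLM in practice) propose an action.
    proposed = propose_action(match, context)
    # 3. Enforce the boundary: anything outside the allowed set goes to a human.
    action = proposed if proposed in ALLOWED_ACTIONS else FALLBACK_ACTION
    return {
        "customer_id": match["customer_id"],
        "pattern": match["pattern"],
        "proposed": proposed,
        "action": action,
    }
```

Keeping both the proposed and the executed action in the decision record means the audit trail shows not only what happened, but also when the guardrail overrode the model.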

The streaming runtime is the right foundation for this class of agent. It inherits Flink’s core properties: low-latency processing, fault tolerance, stateful computation, and the ability to handle millions of events per second. Agents built here are always on and always operating on the most current data. They do not wait to be prompted. They react to the world as it changes.

One note on maturity: Flink Agents is still a preview sub-project with experimental APIs. Commercial distributions offer their own streaming agent previews on top. Production-critical workloads should evaluate readiness carefully. The architectural direction is clear, and the project is moving fast.

Flink CEP in Practice: Financial Services and Manufacturing

Flink CEP delivers immediate and measurable value in two industries in particular: financial services, where fraud is a sequence rather than a single event, and manufacturing and supply chain, where process deviations need to be caught in real time rather than retrospectively.

Financial Services: From 500,000 Transactions to 200 Actionable Signals

A large payment platform cannot route every transaction to an AI agent for fraud evaluation. The cost is prohibitive and the noise-to-signal ratio makes reliable decisions impossible.

With Flink CEP in the pipeline, the raw transaction stream is evaluated continuously against a library of fraud patterns: impossible travel sequences, rapid cross-channel enumeration, account takeover chains, velocity patterns across merchant categories. The vast majority of transactions match no pattern and are discarded immediately. The confirmed pattern matches that do emerge are a fundamentally different kind of data: structured, timestamped, correlated across the specific events that triggered the match, and already identified as significant before any AI system is involved.

Each confirmed match arrives at the agent layer with the full matched event sequence, the pattern type, and the business context from the enrichment layer: the customer’s transaction history, their usual geographic range, any recent account changes, open disputes. The agent reasons about the specific situation and decides: step-up authentication, account freeze, pass-through with monitoring, or escalation to a human analyst. The decision is grounded, explainable, and made within seconds of the pattern completing.

The fraud pattern library itself needs to evolve as attack methods change. Where the deployment supports updating CEP rules without restarting the running job, new patterns take effect in minutes rather than requiring a full redeployment cycle. In fraud detection, that window matters.

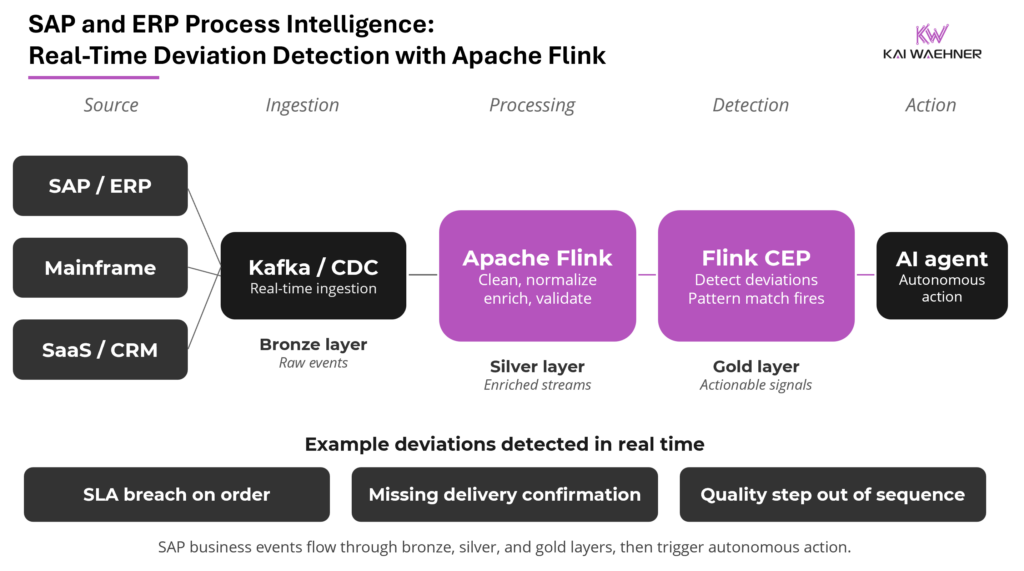

Manufacturing and ERP: SAP Process Intelligence in Real Time

Manufacturing and supply chain operations are among the most process-intensive environments in the enterprise. Every production order, inventory movement, and delivery confirmation represents a business event with an expected sequence, a defined SLA, and real consequences when something goes wrong.

SAP ERP is the system of record for business processes at thousands of large enterprises. Every operational process generates a continuous stream of business events. In traditional architectures that data sits inside SAP, accessible only through batch extracts or expensive API calls. Business process analysis is done retrospectively in tools like Celonis or SAP Signavio. By the time a deviation surfaces, the window to act has already closed.

The event-driven alternative streams SAP events in real time via Kafka or Flink CDC connectors: production orders, inventory movements, delivery confirmations, quality inspection results, financial postings. This is the bronze layer: raw operational event data flowing out of SAP as it happens, without impacting SAP performance.

Flink transforms bronze into silver: cleaned, normalized, enriched event streams joined with reference data and validated for quality. CEP patterns applied to the silver layer detect process deviations in real time. A production order missing its inventory confirmation within the SLA window. A quality inspection failure after a step that should have caught the issue earlier. A delivery sequence arriving out of order. CEP detects each as it unfolds, not hours later in a batch report.
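The first deviation, a production order missing its inventory confirmation within the SLA window, is a missing-event pattern: the signal is something that did not happen. The plain-Python sketch below shows the idea. It is not Flink code. The event kinds `order_created` and `inventory_confirmed` are hypothetical names, and the sketch assumes events arrive sorted by timestamp.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class ProcessEvent:
    order_id: str
    kind: str         # ASSUMPTION: "order_created" or "inventory_confirmed"
    timestamp: float  # event time in seconds


def find_sla_violations(events: Iterable[ProcessEvent], sla_seconds: float) -> List[str]:
    """Return order ids whose confirmation did not arrive within the SLA window.

    ASSUMPTION: `events` is sorted by timestamp. Time only advances when a new
    event arrives, so orders still open at the end of the input are not reported.
    """
    deadlines: Dict[str, float] = {}  # open orders and their confirmation deadline
    violations: List[str] = []
    for ev in events:
        # Event time has advanced: expire every open order whose deadline passed.
        expired = [oid for oid, deadline in deadlines.items() if deadline < ev.timestamp]
        for oid in expired:
            violations.append(oid)
            del deadlines[oid]
        if ev.kind == "order_created":
            deadlines[ev.order_id] = ev.timestamp + sla_seconds
        elif ev.kind == "inventory_confirmed":
            # Confirmation in time closes the order; a late one finds nothing to close.
            deadlines.pop(ev.order_id, None)
    return violations
```

The limitation noted in the docstring is the interesting part. A missing event can only be detected once time moves past the deadline, so a production engine needs some notion of a clock that advances even when the awaited event never shows up.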

When CEP fires, the agent receives both the pattern details and the full business context: where is this order in its lifecycle, what is the inventory position, what is the supplier’s on-time delivery history. The result is process intelligence that was previously only available retrospectively, now delivered in real time while there is still time to act.

The same architecture applies to IBM mainframe environments, CRM platforms like Salesforce, ITSM platforms like ServiceNow, and any other system generating business process events.

CEP, LLM, and ML: Three Tools, Three Decisions

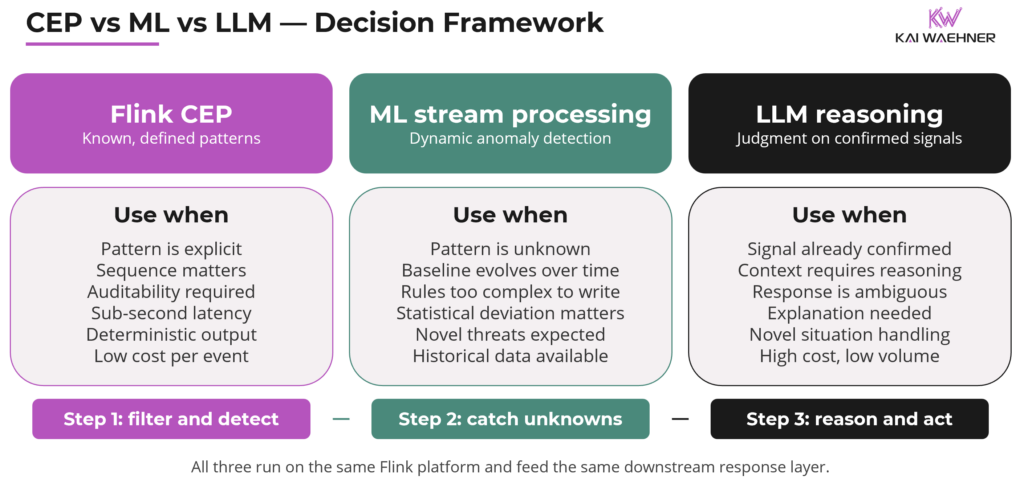

CEP, ML-based anomaly detection, and LLM reasoning are complementary capabilities for different parts of the same problem, not competing alternatives:

- Flink CEP works best for known, defined patterns where determinism and auditability matter.

- ML-based stream processing fits dynamic anomaly detection when the pattern space is too complex or too evolving to define as explicit rules. The model learns what normal looks like and flags deviations in the live stream.

- LLMs handle reasoning and judgment once a signal has been confirmed. Detection is not their job. Deciding what to do about a confirmed detection is.

The practical sequence: Flink CEP first to reduce volume and increase precision. ML for patterns too dynamic to specify as explicit rules. LLM reasoning applied last, only to the confirmed, enriched, context-rich signals that remain.
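A simplified sketch of that ordering follows. It works per event rather than per sequence, so it is a stand-in for real CEP. The rule predicates, the `anomaly_score` function, and the threshold are all hypothetical. What it illustrates is the routing: explicit rules are checked first, the ML score is consulted only when no rule fires, and only the resulting signals would ever be handed to an LLM.

```python
from typing import Any, Callable, Dict, Iterable, List, Mapping

Event = Mapping[str, Any]


def select_signals(
    events: Iterable[Event],
    rules: Mapping[str, Callable[[Event], bool]],
    anomaly_score: Callable[[Event], float],
    threshold: float,
) -> List[Dict[str, Any]]:
    """Return only the events worth sending to the reasoning layer."""
    signals: List[Dict[str, Any]] = []
    for event in events:
        # Stage 1: deterministic rules (stand-in for CEP patterns).
        matched = next((name for name, rule in rules.items() if rule(event)), None)
        if matched is not None:
            signals.append({"source": "cep", "reason": matched, "event": event})
        # Stage 2: ML score, consulted only when no explicit rule fired.
        elif anomaly_score(event) >= threshold:
            signals.append({"source": "ml", "reason": "anomaly", "event": event})
        # Everything else is dropped and never reaches the LLM.
    return signals
```

Tagging each signal with its source keeps the explainability property intact: the agent, and later the auditor, can see whether a deterministic rule or a learned model raised the flag.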

The result is a system that is cost-effective, reliable, and explainable. Raw-stream-to-LLM architectures cannot structurally deliver any of those three properties. Event-first architectures deliver all of them.

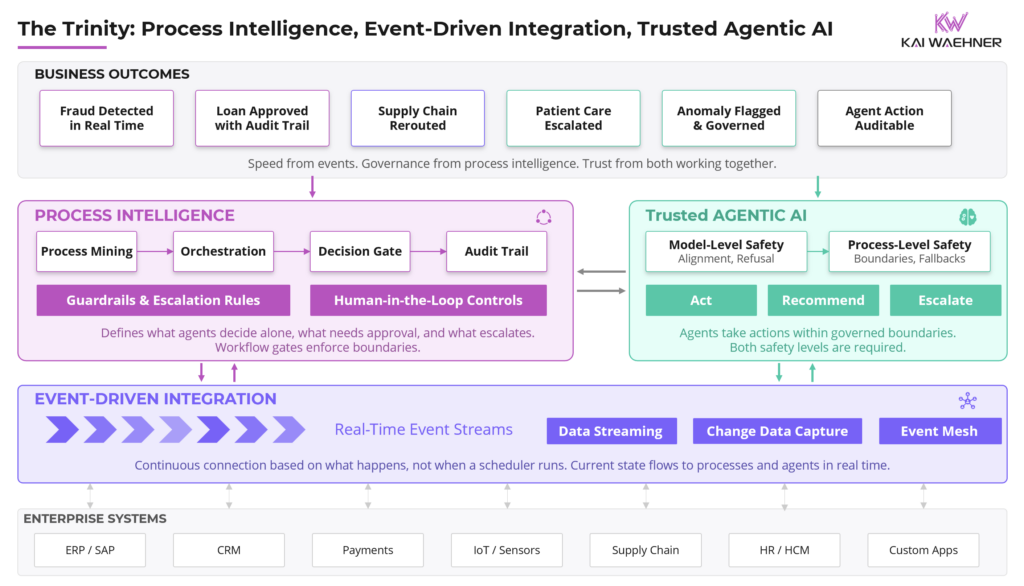

Process Intelligence: What Flink CEP Does Not Replace

Getting this boundary wrong leads to architectures that are either over-engineered or missing critical capabilities.

Flink CEP and Flink Agents are built for real-time, sub-second reaction: detecting patterns, triggering immediate responses, executing autonomous actions at the speed of the event stream. They are not designed for durable, long-running workflows that span hours or days, involve human decision points, require compensation logic when steps fail, or need persistent state tracking across system restarts.

Process orchestration platforms handle exactly that layer: Camunda, Temporal, Apache Airflow, and similar tools. Once Flink CEP detects a fraud pattern and freezes an account, the investigation workflow that follows (notify the customer, escalate to an analyst, collect evidence, resolve) belongs in an orchestration engine. It manages state durably, enforces SLAs across human and automated steps, and provides a complete audit trail.

The separation is clean. Flink owns real-time detection and immediate reaction. Process orchestration owns durable, structured workflows. The two connect through events: Flink CEP publishes a pattern-match event, the orchestration engine consumes it and starts a workflow instance. Neither layer bleeds into the other’s responsibility.

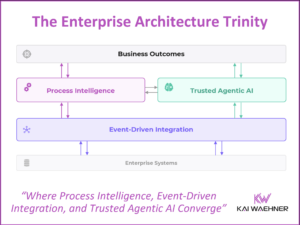

This connects directly to the framework described in The Trinity of Modern Data Architecture. Autonomous AI systems at scale need both layers: real-time event detection to react immediately, and durable process orchestration to manage what follows. A dedicated process intelligence vendor landscape covering orchestration platforms and process mining tools is forthcoming.

Data Governance with Flink CEP Before Agentic AI

Enterprise AI deployments face data governance and sovereignty requirements that generic AI platforms are not designed to handle. Flink’s position as the processing layer before AI is what makes compliance enforceable at the architecture level rather than as an application-layer afterthought.

Before any event reaches an AI agent, Flink can anonymize personally identifiable information, filter data that cannot leave a regulatory jurisdiction, aggregate sensitive signals into compliant summary representations, and enforce schema validation and lineage tracking. These are structural properties of the architecture, enforced in the Flink layer between raw data and AI interface.
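Two of these controls, jurisdiction filtering and masking of personal data, can be sketched in plain Python. The field names (`region`, `email`) and the key handling are assumptions for illustration. Note also that keyed hashing is pseudonymization, a weaker property than full anonymization, and is used here only as a simple stand-in.

```python
import hashlib
import hmac
from typing import Any, Dict, Iterable, Iterator, Mapping, Set


def pseudonymize(value: str, key: bytes) -> str:
    """Replace a value with a keyed SHA-256 digest (stable for the same key)."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def apply_governance(
    events: Iterable[Mapping[str, Any]],
    pii_fields: Set[str],
    allowed_regions: Set[str],
    key: bytes,
) -> Iterator[Dict[str, Any]]:
    """Drop events from disallowed regions and mask PII in the rest.

    ASSUMPTION: each event carries a `region` field; events without one are dropped.
    """
    for event in events:
        if event.get("region") not in allowed_regions:
            continue  # this event must not cross the jurisdiction boundary
        cleaned = dict(event)  # copy, so the source event is not modified
        for field in pii_fields:
            if cleaned.get(field) is not None:
                cleaned[field] = pseudonymize(str(cleaned[field]), key)
        yield cleaned
```

Because the digest is stable for a given key, downstream joins on the masked field still work, while the agent never sees the original value.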

The MCP vs. REST vs. Kafka article covers the full decision framework for how MCP, REST, and Kafka fit together as the agent-facing access layer on top of this governed foundation.

Event-First AI Is the Only Architecture That Scales

AI agents that act on raw event streams fail in production. Cost blows up. Decisions become unreliable. Explainability disappears. Governance breaks down.

The architecture that works is event-first. CEP detects meaningful patterns from the raw stream. Enrichment adds real-time business context. The agent receives a clean, governed, high-signal input. It reasons and acts on something worth acting on.

Event-first AI is not AI that happens to use Kafka or Flink somewhere in the stack. It is an architecture where the streaming layer is the foundation of trust. The quality of the agent’s input. The determinism of detection. The freshness of context. The compliance of data access. The auditability of every decision. All of these come from the layers beneath the agent, not from the agent itself.

Apache Flink makes this architecture real. Flink CEP for detection. Stream processing for enrichment. Flink Agents for autonomous response. Each layer has one job. None bleeds into another.

The enterprises that build trustworthy Agentic AI are not the ones that find the best LLM. They are the ones that invest in the data infrastructure that makes any LLM reliable. Event-first architecture, with Flink CEP at its core, is that investment.

Related Articles: Enterprise Agentic AI Architecture

This article is part of a broader set of pieces that together form a framework for enterprise Agentic AI architecture.

The Trinity of Modern Data Architecture establishes the three interdependent pillars that enterprise AI needs: event-driven data integration, process intelligence, and trusted agentic AI. The CEP architecture described in this article sits at the intersection of all three.

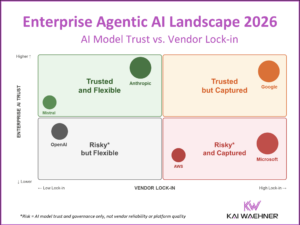

The Enterprise Agentic AI Landscape maps the vendor landscape across trust and flexibility dimensions, providing context for evaluating which AI platforms and agent frameworks can be trusted at enterprise scale.

The MCP vs. REST vs. Kafka architect’s guide covers how the integration and access layer beneath AI agents should be designed, including where MCP fits and where it does not.
