Agentic AI is gaining traction as a design pattern for building more intelligent, autonomous, and collaborative systems. Unlike traditional task-based automation, agentic AI involves intelligent agents that operate independently, make contextual decisions, and collaborate with other agents or systems—across domains, departments, and even enterprises.

In the enterprise world, agentic AI is more than just a technical concept. It represents a shift in how systems interact, learn, and evolve. But unlocking its full potential requires more than AI models and point-to-point APIs—it demands the right integration backbone.

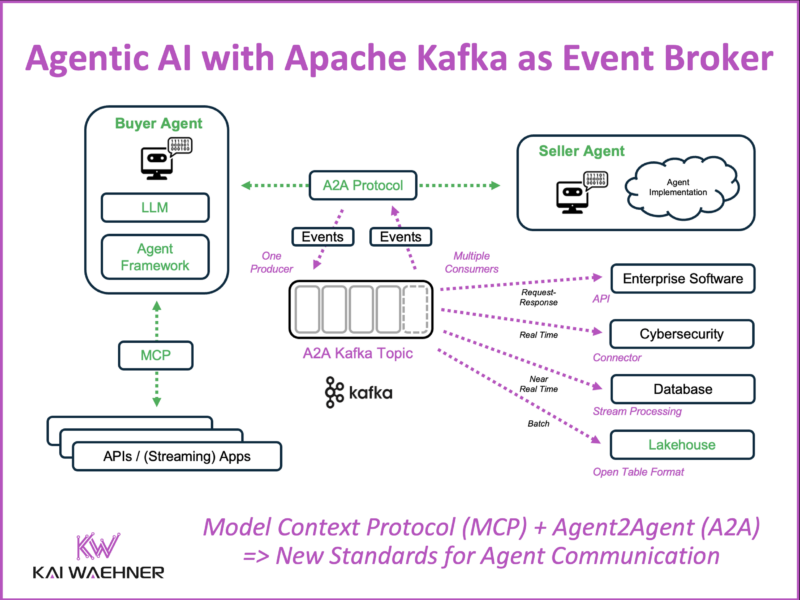

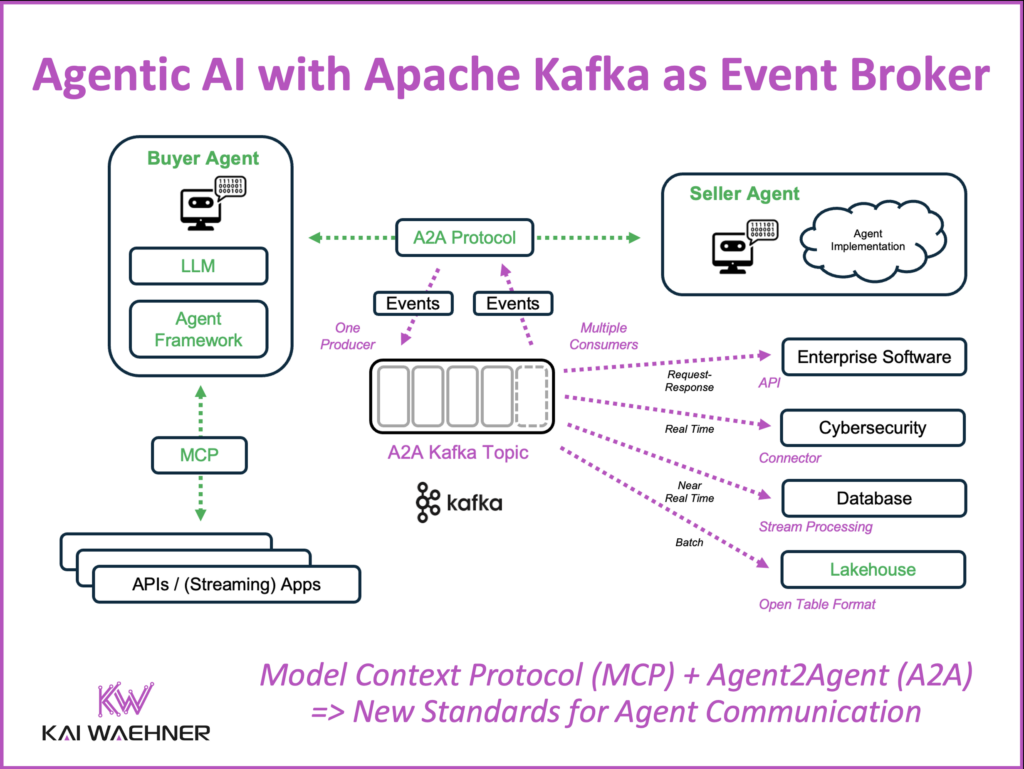

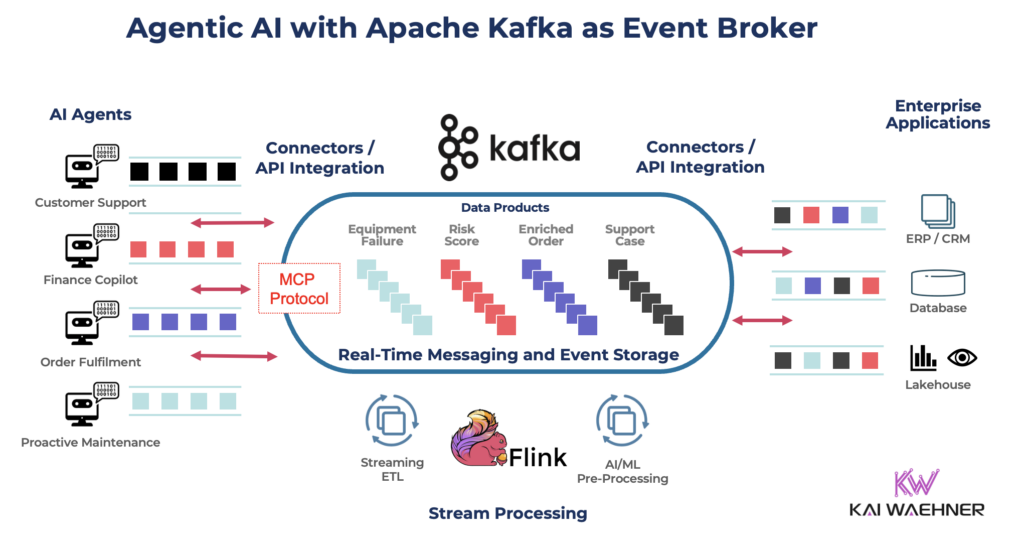

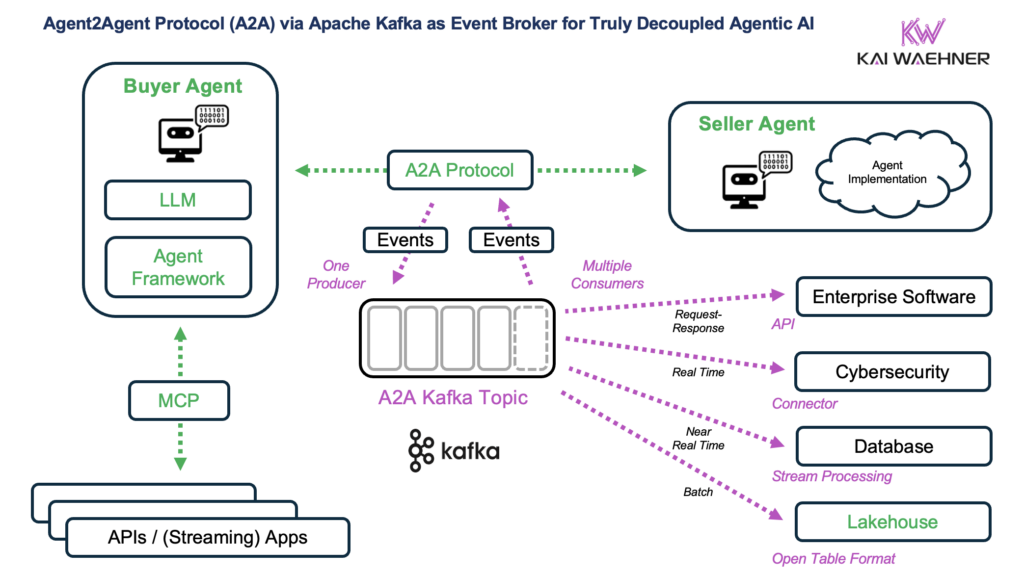

That’s where Apache Kafka comes into play as an event broker for true decoupling, together with two emerging AI standards in an enterprise architecture for agentic AI: Google’s Agent2Agent (A2A) Protocol and Anthropic’s Model Context Protocol (MCP).

Inspired by my colleague Sean Falconer’s blog post, “Why Google’s Agent2Agent Protocol Needs Apache Kafka”, this blog post explores the adoption of agentic AI in enterprises and how an event-driven architecture with Apache Kafka fits into the AI architecture.

Join the data streaming community and stay informed about new blog posts by subscribing to my newsletter and following me on LinkedIn or X (formerly Twitter). And make sure to download my free book about data streaming use cases, including various AI examples across industries.

Business Value of Agentic AI in the Enterprise

For enterprises, the promise of agentic AI is compelling:

- Smarter automation through self-directed, context-aware agents

- Improved customer experience with faster and more personalized responses

- Operational efficiency by connecting internal and external systems more intelligently

- Scalable B2B interactions that span suppliers, partners, and digital ecosystems

But none of this works if systems are coupled by brittle point-to-point APIs, slow batch jobs, or disconnected data pipelines. Autonomous agents need continuous, real-time access to events, shared state, and a common communication fabric that scales across use cases.

Model Context Protocol (MCP) + Agent2Agent (A2A): New Standards for Agentic AI

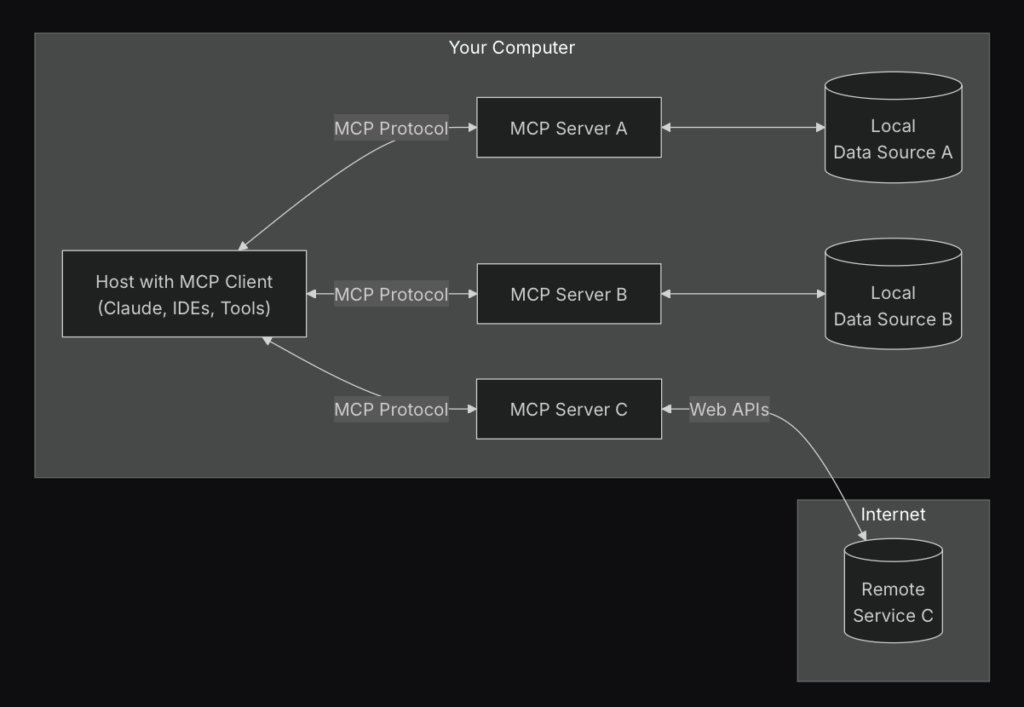

The Model Context Protocol (MCP), introduced by Anthropic, offers a standardized, model-agnostic interface for context exchange between AI agents and external systems. Whether the interaction is streaming, batch, or API-based, MCP abstracts how agents retrieve inputs, send outputs, and trigger actions across services. This enables real-time coordination between models and tools, which improves autonomy, reusability, and interoperability in distributed AI systems.

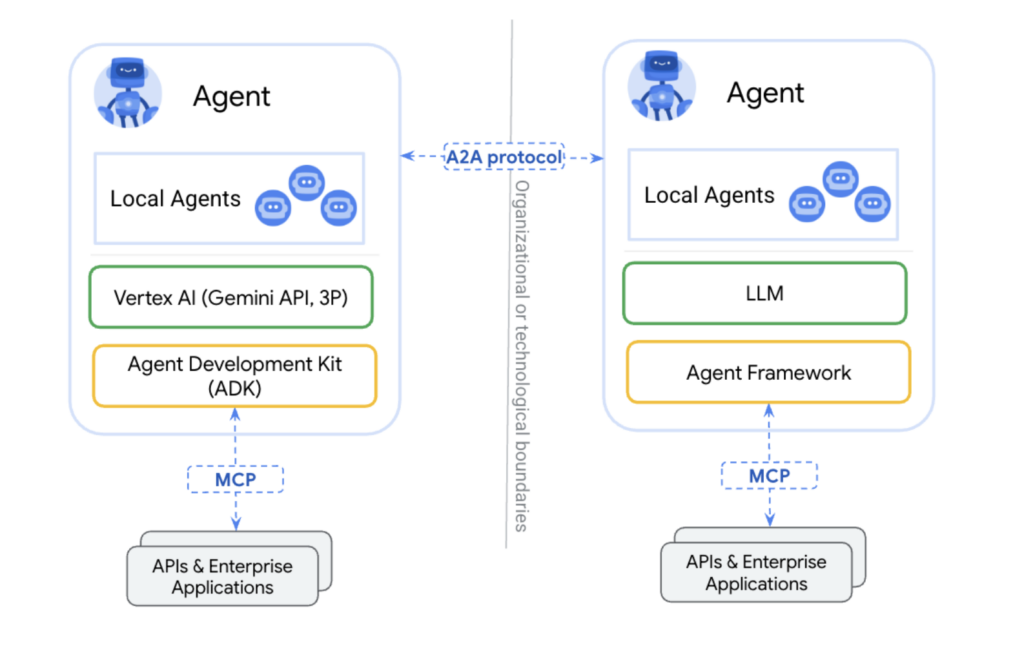

Google’s Agent2Agent (A2A) protocol complements this by defining how autonomous software agents can interact with one another in a standard way. A2A enables scalable agent-to-agent collaboration—where agents discover each other, share state, and delegate tasks without predefined integrations. It’s foundational for building open, multi-agent ecosystems that work across departments, companies, and platforms.
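To make the idea of discovery and delegation more concrete, here is a minimal sketch in Python. It is not the A2A specification: the card fields (`name`, `url`, `skills`), the `example.com` URLs, and the helper functions are simplified assumptions for illustration only. The point is that the caller selects an agent by its advertised capability rather than through a hard-coded integration.

```python
# Hypothetical, simplified agent descriptions. The real A2A protocol defines
# its own richer format; these fields are assumptions for this sketch.
AGENT_CARDS = [
    {"name": "fraud-agent", "url": "https://agents.example.com/fraud",
     "skills": ["fraud-scoring"]},
    {"name": "billing-agent", "url": "https://agents.example.com/billing",
     "skills": ["invoice-lookup", "refund"]},
]


def find_agents(cards, skill):
    """Return every agent that advertises the requested skill."""
    return [card for card in cards if skill in card["skills"]]


def delegate(cards, skill, payload):
    """Build a task for the first agent that advertises the skill."""
    candidates = find_agents(cards, skill)
    if not candidates:
        raise LookupError(f"no agent advertises skill {skill!r}")
    target = candidates[0]
    return {"to": target["url"], "skill": skill, "payload": payload}


task = delegate(AGENT_CARDS, "refund", {"order_id": "A-1"})
```

Adding a new agent in this sketch means adding a card, not changing the caller.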

Why Apache Kafka Is a Better Fit Than an API (HTTP/REST) for A2A and MCP

Most enterprises today use HTTP-based APIs to connect services—ideal for simple, synchronous request-response interactions.

In contrast, Apache Kafka is a distributed event streaming platform designed for asynchronous, high-throughput, and loosely coupled communication—making it a much better fit for multi-agent (A2A) and agentic AI architectures.

| API-Based Integration | Kafka-Based Integration |
|---|---|
| Synchronous, blocking | Asynchronous, event-driven |
| Point-to-point coupling | Loose coupling with pub/sub topics |
| Hard to scale to many agents | Supports multiple consumers natively |
| No shared memory | Kafka retains and replays event history |
| Limited observability | Full traceability with schema registry & DLQs |

Kafka serves as the decoupling layer. It becomes the place where agents publish their state, subscribe to updates, and communicate changes—independently and asynchronously. This enables multi-agent coordination, resilience, and extensibility.
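The comparison above can be illustrated with a toy model. The following sketch is an in-memory stand-in for a single-partition topic, not a Kafka client; the class `InMemoryTopic` and the group names are hypothetical. It shows the two properties that matter most for agents: each consumer group tracks its own read position, and the retained log can be replayed.

```python
from collections import defaultdict


class InMemoryTopic:
    """Toy stand-in for a single-partition topic: an append-only log."""

    def __init__(self):
        self._log = []                     # retained events, in arrival order
        self._offsets = defaultdict(int)   # next offset to read, per group

    def publish(self, event):
        """Append an event and return its offset."""
        self._log.append(event)
        return len(self._log) - 1

    def poll(self, group):
        """Return events this group has not seen yet and advance its offset."""
        start = self._offsets[group]
        events = self._log[start:]
        self._offsets[group] = len(self._log)
        return events

    def seek_to_beginning(self, group):
        """Rewind a group so it replays the full retained history."""
        self._offsets[group] = 0


topic = InMemoryTopic()
topic.publish({"agent": "pricing", "state": "quote_ready"})
topic.publish({"agent": "inventory", "state": "stock_low"})

# Two independent consumers read the same events without knowing each other.
fraud_view = topic.poll("fraud-agent")
alert_view = topic.poll("alerting")

# A consumer that needs to rebuild its context replays the history.
topic.publish({"agent": "pricing", "state": "quote_accepted"})
topic.seek_to_beginning("fraud-agent")
replayed = topic.poll("fraud-agent")
```

In a real deployment, the broker handles partitioning, replication, and retention; the sketch only mirrors the consumption model.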

MCP + Kafka = Open, Flexible Communication

As the adoption of Agentic AI accelerates, there’s a growing need for scalable communication between AI agents, services, and operational systems. The Model Context Protocol (MCP) is emerging as a standard to structure these interactions by defining how agents access tools, send inputs, and receive results. But a protocol alone doesn’t solve the challenges of integration, scaling, or observability.

This is where Apache Kafka comes in.

By combining MCP with Kafka, agents can interact through a Kafka topic—fully decoupled, asynchronous, and in real time. Instead of direct, synchronous calls between agents and services, all communication happens through Kafka topics, using structured events based on the MCP format.

This model supports a wide range of implementations and tech stacks. For instance:

- A Python-based AI agent deployed in a SaaS environment

- A Spring Boot Java microservice running inside a transactional core system

- A Flink application deployed at the edge performing low-latency stream processing

- An API gateway translating HTTP requests into MCP-compliant Kafka events

Regardless of where or how an agent is implemented, it can participate in the same event-driven system. Kafka ensures durability, replayability, and scalability. MCP provides the semantic structure for requests and responses.
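As a rough sketch of what such a structured event could look like: MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method. How that message maps onto a Kafka record is an assumption of this example, not something the MCP specification defines. The `reply-topic` header, the topic name, and the tool name `lookup_order` are hypothetical.

```python
import json
import uuid


def build_tool_call_event(tool_name, arguments, reply_topic):
    """Wrap an MCP-style tools/call request as a record (key, value, headers)."""
    request_id = str(uuid.uuid4())
    request = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return {
        # Assumption: the request id doubles as the record key for correlation.
        "key": request_id.encode("utf-8"),
        "value": json.dumps(request).encode("utf-8"),
        # Assumption: a header tells the responder where to publish the result.
        "headers": [("reply-topic", reply_topic.encode("utf-8"))],
    }


def build_tool_result_event(request_record, text):
    """Build the matching response record, correlated by the request id."""
    request = json.loads(request_record["value"])
    response = {
        "jsonrpc": "2.0",
        "id": request["id"],
        "result": {"content": [{"type": "text", "text": text}], "isError": False},
    }
    return {
        "key": request_record["key"],
        "value": json.dumps(response).encode("utf-8"),
        "headers": [],
    }


request_record = build_tool_call_event(
    "lookup_order", {"order_id": "A-1"}, "agent.responses"
)
result_record = build_tool_result_event(request_record, "shipped")
```

Because the record value is plain JSON bytes, any Kafka client in any language can produce or consume it, which is what lets the Python agent, the Java microservice, and the API gateway above share one topic.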

The result is a highly flexible, loosely coupled architecture for Agentic AI—one that supports real-time processing, cross-system coordination, and long-term observability. This combination is already being explored in early enterprise projects and will be a key building block for agent-based systems moving into production.

Stream Processing as the Agent’s Companion

Stream processing technologies like Apache Flink or Kafka Streams allow agents to:

- Filter, join, and enrich events in motion

- Maintain stateful context for decisions (e.g., real-time credit risk)

- Trigger new downstream actions based on complex event patterns

- Apply AI directly within the stream processing logic, enabling real-time inference and contextual decision-making with embedded models or external calls to a model server, vector database, or any other AI platform

Agents don’t need to manage all logic themselves. The data streaming platform can pre-process information, enforce policies, and even trigger fallback or compensating workflows—making agents simpler and more focused.
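A minimal sketch of this pattern in plain Python follows. It is not Flink or Kafka Streams code; the function name, field names, window size, and threshold are assumptions chosen for illustration. The generator filters events, keeps keyed state per customer, and emits a downstream action when a rolling total crosses a limit.

```python
from collections import defaultdict, deque


def flag_risky_customers(payments, window_size=3, limit=1000.0):
    """Yield an alert when a customer's last `window_size` payments exceed `limit`."""
    # Keyed state: a bounded window of recent amounts per customer.
    recent = defaultdict(lambda: deque(maxlen=window_size))
    for payment in payments:
        if payment["amount"] <= 0:   # filter: skip refunds and invalid amounts
            continue
        window = recent[payment["customer"]]
        window.append(payment["amount"])
        total = sum(window)
        if total > limit:            # trigger a downstream action
            yield {
                "customer": payment["customer"],
                "rolling_total": total,
                "action": "review",
            }


payments = [
    {"customer": "c1", "amount": 400.0},
    {"customer": "c2", "amount": 50.0},
    {"customer": "c1", "amount": 500.0},
    {"customer": "c1", "amount": -20.0},
    {"customer": "c1", "amount": 300.0},
]
alerts = list(flag_risky_customers(payments))
```

An agent consuming the alert stream only sees the cases that need a decision, which is the sense in which the streaming layer keeps agents simpler.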

Technology Flexibility for Agentic AI Design with Data Contracts

One of the biggest advantages of a Kafka-based, event-driven, and decoupled backend for agentic systems is that agents can be implemented in any stack:

- Languages: Python, Java, Go, etc.

- Environments: Containers, serverless, JVM apps, SaaS tools

- Communication styles: Event streaming, REST APIs, scheduled jobs

The Kafka topic, together with its schema, is the stable data contract for quality and policy enforcement. Agents can evolve independently, be deployed incrementally, and interoperate without tight dependencies.
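To illustrate contract enforcement, here is a simplified sketch. In practice this job is usually done by a schema registry with formats such as Avro, Protobuf, or JSON Schema; the dictionary-based contract, the field names, and the topic names `orders` and `orders.dlq` below are assumptions made for this example.

```python
# Hypothetical contract: required fields and their expected Python types.
ORDER_CONTRACT = {
    "order_id": str,
    "amount": float,
    "currency": str,
}


def contract_violations(event, contract):
    """Return a list of violations; an empty list means the event conforms."""
    problems = []
    for field, expected_type in contract.items():
        if field not in event:
            problems.append(f"missing field: {field}")
        elif not isinstance(event[field], expected_type):
            problems.append(
                f"{field}: expected {expected_type.__name__}, "
                f"got {type(event[field]).__name__}"
            )
    return problems


def route(event, contract):
    """Send conforming events to the main topic, others to a dead letter queue."""
    return "orders" if not contract_violations(event, contract) else "orders.dlq"


good = {"order_id": "A-1", "amount": 10.0, "currency": "EUR"}
bad = {"order_id": "A-1", "amount": "10"}
good_topic = route(good, ORDER_CONTRACT)
bad_topic = route(bad, ORDER_CONTRACT)
bad_reasons = contract_violations(bad, ORDER_CONTRACT)
```

Producers and consumers only depend on the contract, so an agent written in another language can be swapped in as long as its events pass the same checks.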

Microservices, Data Products, and Reusability – Agentic AI Is Just One Piece of the Puzzle

To be effective, Agentic AI needs to connect seamlessly with existing operational systems and business workflows.

Kafka topics enable the creation of reusable data products that serve multiple consumers—AI agents, dashboards, services, or external partners. This aligns perfectly with data mesh and microservice principles, where ownership, scalability, and interoperability are key.

A single stream of enriched order events might be consumed via a single data product by:

- A fraud detection agent

- A real-time alerting system

- An agent triggering SAP workflow updates

- A lakehouse for reporting and batch analytics

This one-to-many model is the opposite of traditional point-to-point REST designs and is crucial for enabling agentic orchestration at scale.

Agentic AI Needs Integration with Core Enterprise Systems

Agentic AI is not a standalone trend—it’s becoming an integral part of broader enterprise AI strategies. While this post focuses on architectural foundations like Kafka, MCP, and A2A, it’s important to recognize how this infrastructure complements the evolution of major AI platforms.

Leading vendors such as Databricks, Snowflake, and others are building scalable foundations for machine learning, analytics, and generative AI. These platforms often handle model training and serving. But to bring agentic capabilities into production—especially for real-time, autonomous workflows—they must connect with operational, transactional systems and other agents at runtime. (See also: Confluent + Databricks blog series | Apache Kafka + Snowflake blog series)

This is where Kafka as the event broker becomes essential: it links these analytical backends with AI agents, transactional systems, and streaming pipelines across the enterprise.

At the same time, enterprise application vendors are embedding AI assistants and agents directly into their platforms:

- SAP Joule / Business AI – Embedded AI for finance, supply chain, and operations

- Salesforce Einstein / Copilot Studio – Generative AI for CRM and sales automation

- ServiceNow Now Assist – Predictive automation across IT and employee services

- Oracle Fusion AI / OCI – ML for ERP, HCM, and procurement

- Microsoft Copilot – Integrated AI across Dynamics and Power Platform

- IBM watsonx, Adobe Sensei, Infor Coleman AI – Governed, domain-specific AI agents

Each of these solutions benefits from the same architectural foundation: real-time data access, decoupled integration, and standardized agent communication.

Whether deployed internally or sourced from vendors, agents need reliable event-driven infrastructure to coordinate with each other and with backend systems. Apache Kafka provides this core integration layer—supporting a consistent, scalable, and open foundation for agentic AI across the enterprise.

Agentic AI Requires Decoupling – Apache Kafka Supports A2A and MCP as an Event Broker

To deliver on the promise of agentic AI, enterprises must move beyond point-to-point APIs and batch integrations. They need a shared, event-driven foundation that enables agents (and other enterprise software) to work independently and together—with shared context, consistent data, and scalable interactions.

Apache Kafka provides exactly that. Combined with MCP and A2A for standardized Agentic AI communication, Kafka unlocks the flexibility, resilience, and openness needed for next-generation enterprise AI.

It’s not about picking one agent platform—it’s about giving every agent the same, reliable interface to the rest of the world. Kafka is that interface.
