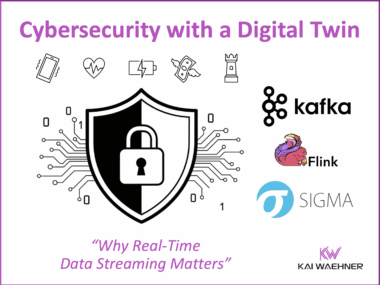

Cybersecurity with a Digital Twin: Why Real-Time Data Streaming Matters

Cyberattacks on critical infrastructure and manufacturing are increasing, and ransomware and manipulated sensor data pose severe risks. Digital twins combined with data streaming provide real-time visibility, continuous monitoring, and proactive defense across both IT and OT environments. Using technologies like Kafka, Flink, and Sigma, organizations can detect anomalies in near real time, strengthen resilience, and secure their digital transformation.