Data Streaming in Retail: Social Commerce from Influencers to Inventory

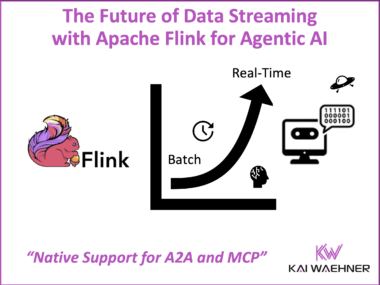

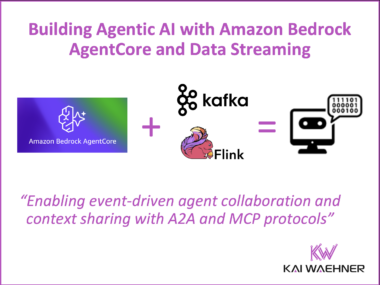

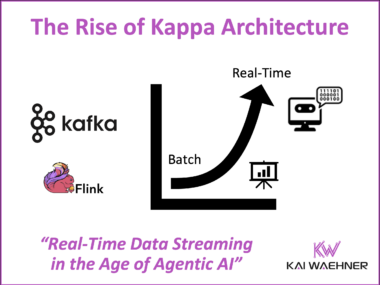

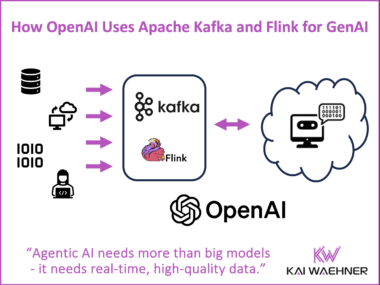

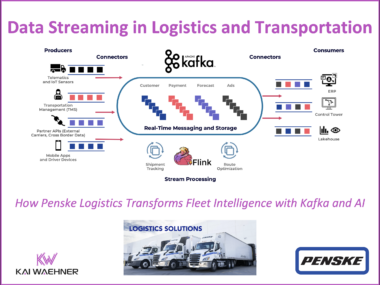

Social commerce is reshaping retail by merging entertainment, influencer marketing, and instant purchasing into one real-time experience. Platforms like TikTok and Instagram have become active digital storefronts where discovery and purchase happen in the same moment. This article explains how data streaming with Apache Kafka and Apache Flink lets retailers power social commerce through continuous data flow, real-time inventory updates, and personalized engagement. It also shows how streaming unifies marketing, operations, and AI-driven decision-making, and how it helps retailers compete with new AI players such as OpenAI that are redefining digital shopping.