Want to see an Internet of Things (IoT) example at huge scale? Not just 100 or 1,000 devices producing data, but a truly scalable demo with millions of messages from tens of thousands of devices? This is the right demo for you! It leverages Kubernetes, Apache Kafka, MQTT and TensorFlow.

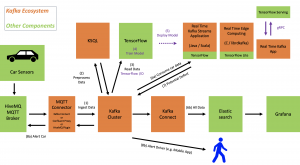

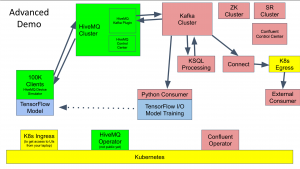

The demo shows how you can integrate with tens or hundreds of thousands of IoT devices and process the data in real time. The use case is predictive maintenance (i.e. anomaly detection) in a connected car infrastructure to predict engine failures.
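To make the predictive maintenance idea concrete, here is a minimal sketch of anomaly detection on a stream of sensor readings. It is not the demo's TensorFlow model: it swaps in a simple rolling z-score as a stand-in, and the class `RollingAnomalyDetector`, its `window` and `threshold` parameters, and the sample temperature values are all hypothetical.

```python
from collections import deque
from statistics import mean, pstdev


class RollingAnomalyDetector:
    """Flags a reading that deviates strongly from recent history.

    Hypothetical stand-in for a trained model: uses a rolling z-score.
    """

    def __init__(self, window: int = 20, threshold: float = 3.0) -> None:
        self.history = deque(maxlen=window)  # most recent normal readings
        self.threshold = threshold           # z-score above which we flag

    def is_anomaly(self, value: float) -> bool:
        anomalous = False
        # Wait for a few readings before the statistics are meaningful.
        if len(self.history) >= 5:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0:
                anomalous = abs(value - mu) / sigma > self.threshold
        # Learn only from normal readings so outliers do not skew the baseline.
        if not anomalous:
            self.history.append(value)
        return anomalous


# Made-up engine temperature readings; the last one simulates a failing engine.
readings = [90.1, 89.8, 90.3, 90.0, 89.9, 90.2, 90.1, 120.5]
detector = RollingAnomalyDetector(window=20, threshold=3.0)
flags = [detector.is_anomaly(r) for r in readings]
```

With these sample values only the final reading is flagged. A learned model would replace the z-score, but the surrounding pattern stays the same: score each incoming event and raise an alert when the score crosses a threshold.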

IoT Infrastructure – MQTT and Kafka on Kubernetes

We deploy Kubernetes, Kafka, MQTT and TensorFlow in a scalable, cloud-native infrastructure to integrate and analyse sensor data from 100000 cars in real time. The infrastructure is built with Terraform. We use GCP, but you could do the same on AWS, Azure, Alibaba or on premises.

Data processing and analytics are done in real time at scale with GKE on GCP, HiveMQ, Confluent and TensorFlow I/O, enabling streaming machine learning / deep learning and bi-directional communication in a scalable, elastic and reliable infrastructure.
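The data flow described above, from MQTT messages published by cars to records in Kafka, can be sketched as a pure mapping function. This is only an illustration under assumptions: the MQTT topic layout `car/<car_id>/sensors`, the Kafka topic name `sensor-data`, the JSON payload fields and the helper `mqtt_to_kafka_record` are hypothetical. The demo relies on HiveMQ and Confluent for this integration rather than on hand-written code like this.

```python
import json
from typing import NamedTuple


class KafkaRecord(NamedTuple):
    topic: str
    key: bytes
    value: bytes


def mqtt_to_kafka_record(mqtt_topic: str, payload: bytes,
                         kafka_topic: str = "sensor-data") -> KafkaRecord:
    """Map one MQTT message to one Kafka record keyed by car ID.

    Assumes the hypothetical MQTT topic layout car/<car_id>/sensors.
    """
    parts = mqtt_topic.split("/")
    if len(parts) != 3 or parts[0] != "car" or parts[2] != "sensors":
        raise ValueError(f"unexpected MQTT topic: {mqtt_topic!r}")
    car_id = parts[1]

    reading = json.loads(payload)   # fails early if the payload is not JSON
    reading["car_id"] = car_id      # carry the device ID inside the event too
    value = json.dumps(reading, sort_keys=True).encode("utf-8")

    # With Kafka's default partitioning, records that share a key land in the
    # same partition, which preserves the order of each car's readings.
    return KafkaRecord(kafka_topic, car_id.encode("utf-8"), value)


record = mqtt_to_kafka_record(
    "car/car-42/sensors", b'{"engine_temp": 90.1, "rpm": 2100}'
)
```

Keying by car ID is a design choice worth noting: it lets the number of partitions grow with the fleet while each car's events stay ordered for downstream consumers.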

GitHub Project – 100000 Connected Cars

The project is available on GitHub. You can set up the demo in about 30 minutes by installing a few CLI tools and executing two or three shell scripts.

Check out the GitHub project “Streaming Machine Learning at Scale from 100000 IoT Devices with HiveMQ, Apache Kafka and TensorFlow”.

Please try out the demo. Feedback and PRs are welcome.

20-Minute Live Demo – IoT at Scale on GCP with GKE, Confluent, HiveMQ and TensorFlow I/O

Here is the video recording of the live demo:


If your area of interest is Industrial IoT (IIoT), you might also want to check out the following example. It covers the integration of machines and PLCs like Siemens S7, Modbus or Beckhoff in factories and on shop floors:

Apache Kafka, KSQL and Apache PLC4X for IIoT Data Integration and Processing