This blog post explores the state of data streaming in 2023 for digital natives born in the cloud. The evolution of digital services and new business models requires real-time end-to-end visibility, fancy mobile apps, and integration with pioneering technologies like fully managed cloud services for fast time-to-market, 5G for low latency, or augmented reality for innovation. Data streaming allows integrating and correlating data in real-time at any scale to improve the most innovative applications leveraging Apache Kafka.

I look at trends for digital natives to explore how data streaming helps as a business enabler, including customer stories from New Relic, Wix, Expedia, Apna, Grab, and more. A complete slide deck and on-demand video recording are included.

General trends for digital natives

Digital natives are data-driven tech companies born in the cloud. Their SaaS solutions are built on cloud-native infrastructure that provides elastic and flexible operations and scale. AI and Machine Learning improve business processes while the data flows through the backend systems.

The data-driven enterprise in 2023

McKinsey & Company published an excellent article on seven characteristics that will define the data-driven enterprise:

1) Data embedded in every decision, interaction, and process

2) Data is processed and delivered in real-time

3) Flexible data stores enable integrated, ready-to-use data

4) Data operating model treats data like a product

5) The chief data officer’s role is expanded to generate value

6) Data-ecosystem memberships are the norm

7) Data management is prioritized and automated for privacy, security, and resiliency

This list from McKinsey & Company maps precisely to the value of data streaming: using data at the right time in the right context. The success stories below are all data-driven and build on these characteristics.

Digital natives born in the cloud

A digital native enterprise can have many meanings. IDC has a great definition:

“IDC defines Digital native businesses (DNBs) as companies built based on modern, cloud-native technologies, leveraging data and AI across all aspects of their operations, from logistics to business models to customer engagement. All core value or revenue-generating processes are dependent on digital technology.”

Companies are born in the cloud, leverage fully managed services, and are consequently innovative with fast time to market.

AI and machine learning (beyond the buzz)

“ChatGPT, while cool, is just the beginning; enterprise uses for generative AI are far more sophisticated,” says Gartner. I couldn’t agree more. But even more interesting: Machine Learning (the part of AI that is enterprise ready) is already used in many companies.

While everybody talks about Generative AI (GenAI) these days, I prefer talking about real-world success stories that have leveraged analytic models for many years to detect fraud, upsell to customers, or predict machine failures. GenAI is “just” another more advanced model that you can embed into your IT infrastructure and business processes the same way.

Data streaming at digital native tech companies

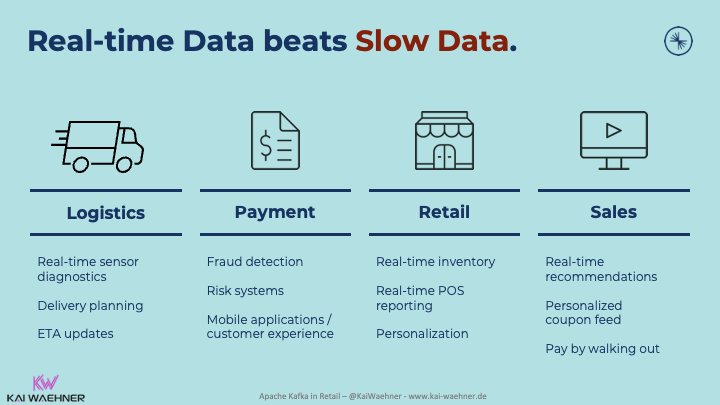

Adopting trends across industries is only possible if enterprises can provide and correlate information correctly in the proper context. Real-time, which means using the information in milliseconds, seconds, or minutes, is almost always better than processing data later (whatever later means):

Digital natives combine all the power of data streaming: Real-time messaging at any scale with storage for true decoupling, data integration, and data correlation capabilities. Apache Kafka is the de facto standard for data streaming.

Data streaming with the Apache Kafka ecosystem and cloud services is used throughout the supply chain of any industry. Here are just a few examples:

Search my blog for various articles related to this topic in your industry: Search Kai’s blog.

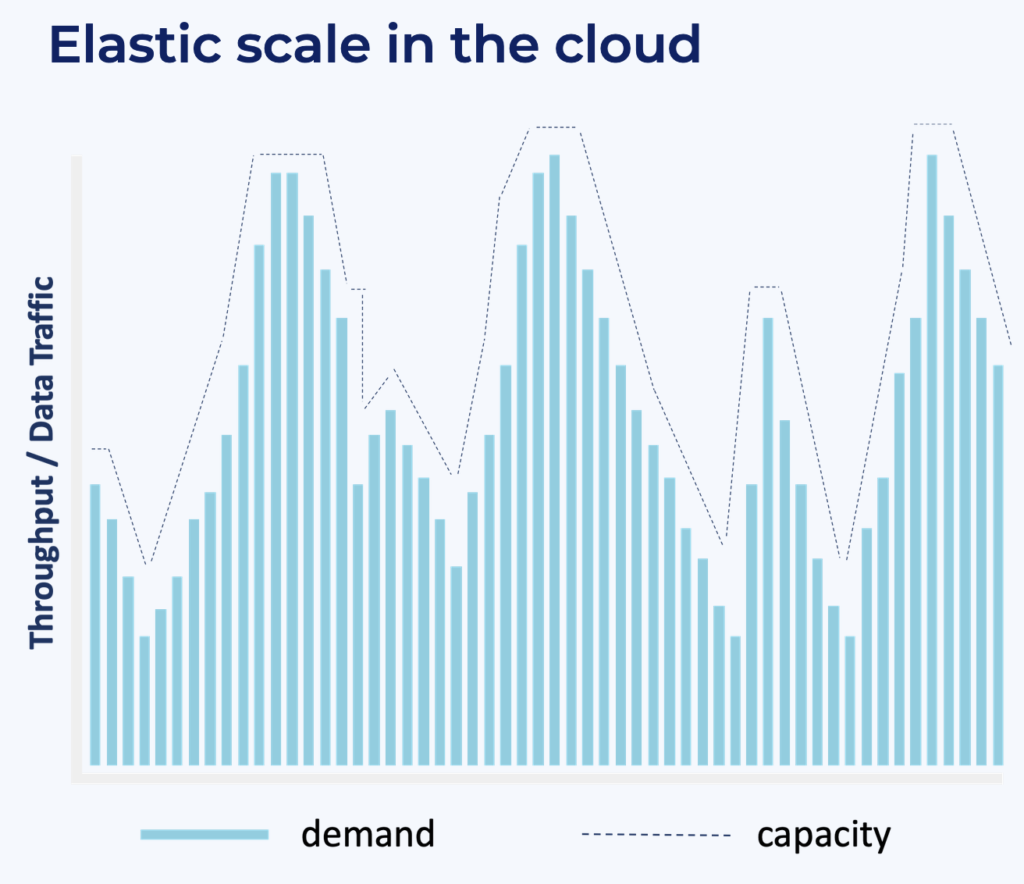

Elastic scale with cloud-native infrastructure

One of the most significant benefits of cloud-native SaaS offerings is elastic scalability out of the box. Tech companies can start new projects with a small footprint and pay-as-you-go. If the project is successful or if industry peaks come (like Black Friday or Christmas season in retail), the cloud-native infrastructure scales up; and back down after the peak:

There is no need to change the architecture from a proof of concept to an extreme scale. Confluent’s fully managed SaaS for Apache Kafka is an excellent example. Learn how to scale Apache Kafka to 10+ GB per second in Confluent Cloud without the need to re-architect your applications.

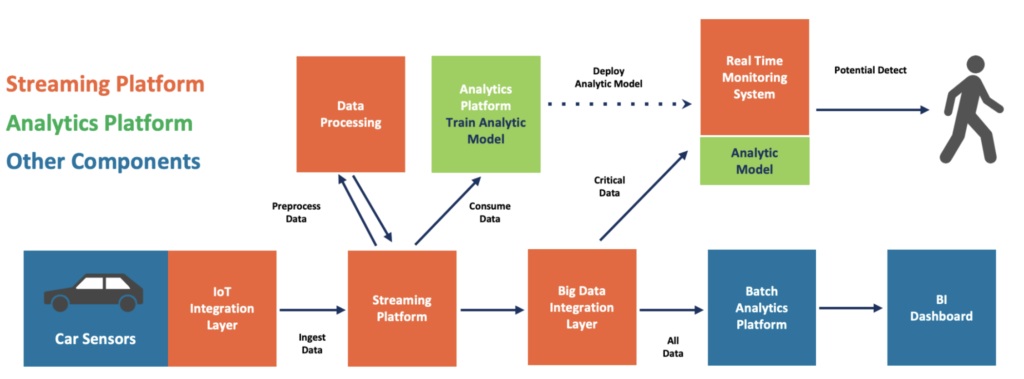

Data streaming + AI / machine learning = real-time intelligence

The combination of data streaming with Kafka and machine learning with TensorFlow or other ML frameworks is nothing new. I explored how to “Build and Deploy Scalable Machine Learning in Production with Apache Kafka” in 2017, i.e., six years ago.

Since then, I have written many further articles and supported various enterprises deploying data streaming and machine learning. Here is an example of such an architecture:

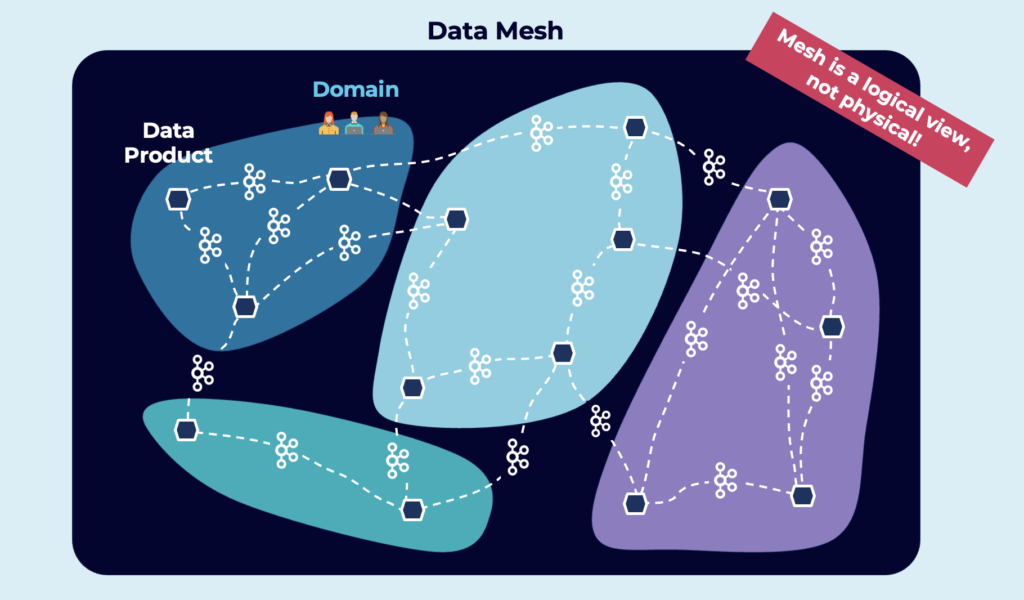

Data mesh for decoupling, flexibility and focus on data products

Digital natives don’t (have to) rely on monolithic, proprietary, and inflexible legacy infrastructure. Instead, tech companies start from scratch with modern architecture. Domain-driven design and microservices are combined in a data mesh, where business units focus on solving business problems with data products:

Architecture trends for data streaming used by digital natives

Digital natives leverage trends for enterprise architectures to improve cost, flexibility, security, and latency. Four essential topics I see these days at tech companies are:

- Decentralization with a data mesh

- Kappa architecture replacing Lambda

- Global data streaming

- AI/Machine Learning with data streaming

Let’s look deeper into some enterprise architectures that leverage data streaming.

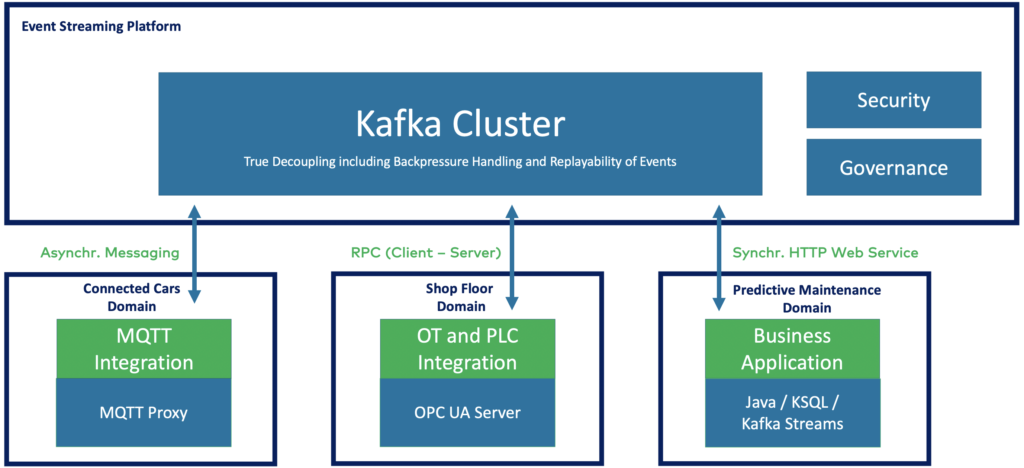

Decentralization with a data mesh

There is no single technology or product for a data mesh! But the heart of a decentralized data mesh infrastructure must be real-time, reliable, and scalable.

Data streaming with Apache Kafka is the perfect foundation for a data mesh: Dumb pipes and smart endpoints truly decouple independent applications. This domain-driven design allows teams to focus on data products:

Contrary to a data lake or data warehouse, the data streaming platform is real-time, scalable, and reliable, which is a unique advantage for building a decentralized data mesh.
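To make "dumb pipes and smart endpoints" concrete, here is a minimal sketch in plain Python. It is not Kafka client code: `InMemoryLog` is a hypothetical stand-in for a topic, and the `billing` and `analytics` group names are made up for illustration. The point it shows is that the pipe only stores events and tracks read positions, while each domain decides on its own what to do with the data and when to read it.

```python
from collections import defaultdict


class InMemoryLog:
    """Hypothetical stand-in for a topic: an append-only list of events."""

    def __init__(self):
        self._events = []
        # Each consumer group tracks its own read position independently.
        self._offsets = defaultdict(int)

    def append(self, event):
        self._events.append(event)

    def poll(self, group):
        """Return the events this group has not seen yet and advance its offset."""
        start = self._offsets[group]
        batch = self._events[start:]
        self._offsets[group] = len(self._events)
        return batch


orders = InMemoryLog()
orders.append({"order_id": 1, "amount": 40})
orders.append({"order_id": 2, "amount": 60})

# The billing domain reads the events that exist so far.
billing_total = sum(e["amount"] for e in orders.poll("billing"))

orders.append({"order_id": 3, "amount": 5})

# The analytics domain joins later and still sees the full history.
analytics_count = len(orders.poll("analytics"))

# Billing only receives what it has not processed yet.
billing_new = orders.poll("billing")
```

Because the log keeps the events, a new domain can be added without touching the producer or the existing consumers.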

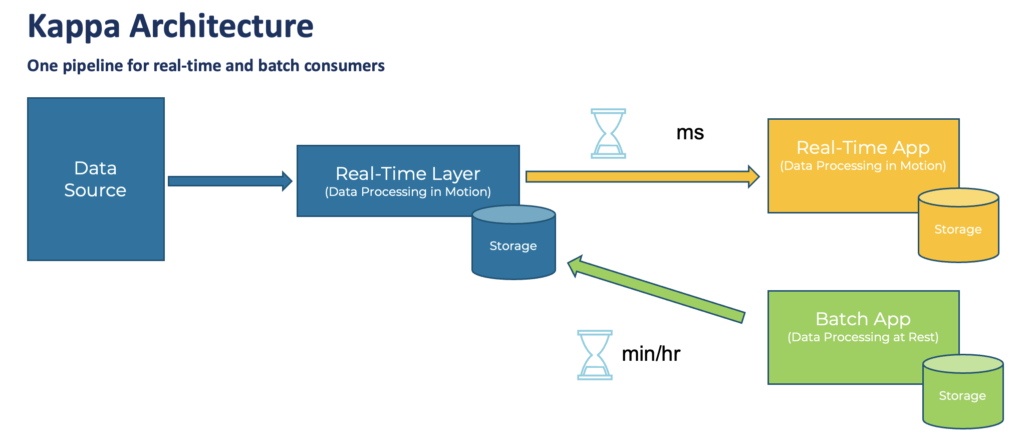

Kappa architecture replacing Lambda

The Kappa architecture is an event-based software architecture that can handle all data at any scale in real-time for transactional AND analytical workloads.

The central premise behind the Kappa architecture is that you can perform real-time and batch processing with a single technology stack. The heart of the infrastructure is streaming architecture.

Unlike the Lambda architecture, this approach only requires re-processing when your processing code changes and you need to recompute your results.
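The re-processing idea can be illustrated with a small sketch in plain Python. Everything here is an assumption made for illustration: the `replay` helper, the two versions of the counting logic, and the sample events. A list stands in for the retained event log. When the logic changes from v1 to v2, the same log is replayed through the new code instead of maintaining a separate batch pipeline.

```python
def replay(log, process):
    """Recompute a result by running the processing function over the full log."""
    state = {}
    for event in log:
        process(state, event)
    return state


def count_clicks_v1(state, event):
    # First version of the logic: count every click per user.
    state[event["user"]] = state.get(event["user"], 0) + 1


def count_clicks_v2(state, event):
    # Changed logic: ignore clicks flagged as bot traffic.
    if not event.get("bot", False):
        state[event["user"]] = state.get(event["user"], 0) + 1


# The retained event log is the single source of truth.
event_log = [
    {"user": "ann", "bot": False},
    {"user": "bob", "bot": True},
    {"user": "ann", "bot": False},
]

result_v1 = replay(event_log, count_clicks_v1)
# The code changed, so the same log is replayed through the new version.
result_v2 = replay(event_log, count_clicks_v2)
```

The same `replay` function serves both the initial computation and the recomputation, which is the single-stack premise in miniature.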

I wrote a detailed article with several real-world case studies exploring why “Kappa Architecture is Mainstream Replacing Lambda”.

Global data streaming

Multi-cluster and cross-data center deployments of Apache Kafka have become the norm rather than an exception.

Several scenarios require multi-cluster Kafka deployments with specific requirements and trade-offs, including disaster recovery, aggregation for analytics, cloud migration, mission-critical stretched deployments, and global Kafka.

Learn all the details in my article “Architecture patterns for distributed, hybrid, edge and global Apache Kafka deployments”.

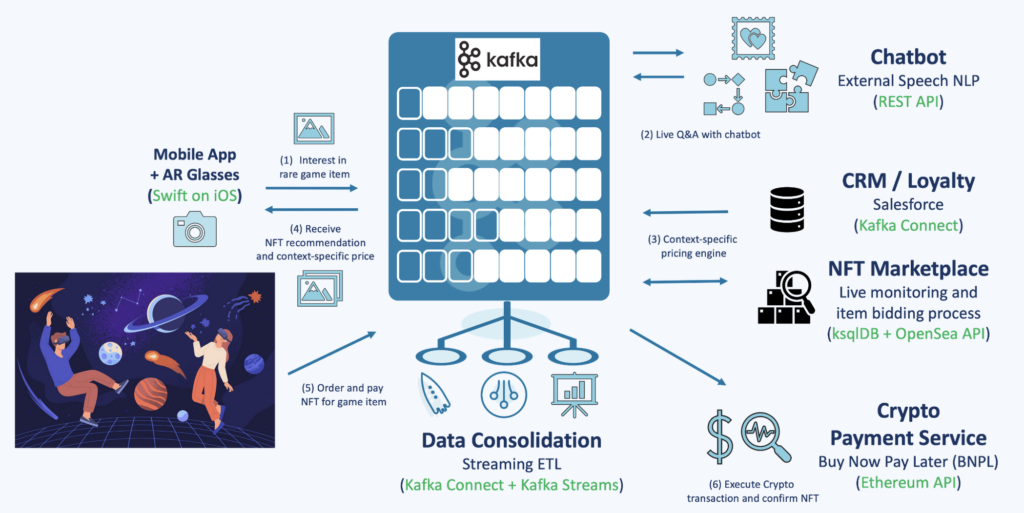

Natural language processing (NLP) with data streaming for real-time Generative AI (GenAI)

Natural Language Processing (NLP) helps many projects in the real world for service desk automation, customer conversation with a chatbot, content moderation in social networks, and many other use cases. Generative AI (GenAI) is “just” the latest generation of these analytic models. Many enterprises have combined NLP with data streaming for many years for real-time business processes.

Apache Kafka became the predominant orchestration layer in these machine learning platforms for integrating various data sources, processing at scale, and real-time model inference.
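As a rough illustration of real-time model inference inside a stream, consider the following plain Python sketch. The `score` function is a hypothetical placeholder for a trained model (in practice this could be a TensorFlow model or a call to an LLM), and the payment events and the `0.8` threshold are invented for the example. Each event is scored as it arrives, and the enriched event is passed on to downstream consumers.

```python
def score(event):
    """Hypothetical stand-in for a trained model; returns a risk score in [0, 1]."""
    return min(event["amount"] / 1000.0, 1.0)


def enrich(stream, threshold=0.8):
    """Apply the model to each event as it arrives and yield the enriched event."""
    for event in stream:
        risk = score(event)
        yield {**event, "risk": risk, "flagged": risk >= threshold}


# A list stands in for the incoming event stream.
payments = [
    {"id": "p1", "amount": 120},
    {"id": "p2", "amount": 950},
]

scored = list(enrich(payments))
```

Swapping `score` for a different model does not change the surrounding pipeline, which is why a new model can be embedded into an existing streaming architecture.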

Here is an architecture that shows how teams easily add Generative AI and other machine learning models (like large language models, LLM) to their existing data streaming architecture:

Time to market is critical. AI does not require a completely new enterprise architecture. The true decoupling allows the addition of new applications/technologies and embedding them into the existing business processes.

An excellent example is Expedia: The online travel company added a chatbot to the existing call center scenario to reduce costs, speed up response times, and make customers happier. Learn more about Expedia and other case studies in my blog post “Apache Kafka for Conversational AI, NLP, and Generative AI”.

New customer stories of digital natives using data streaming

So much innovation is happening with data streaming. Digital natives lead the race. Automation and digitalization change how tech companies create entirely new business models.

Most digital natives use a cloud-first approach to improve time-to-market, increase flexibility, and focus on business logic instead of operating IT infrastructure. And elastic scalability gets even more critical when you start small but think big and global from the beginning.

Here are a few customer stories from digital natives worldwide:

- New Relic: Observability platform ingesting up to 7 billion data points per minute for real-time and historical analysis.

- Wix: Web development services with online drag & drop tools built with a global data mesh.

- Apna: India’s largest hiring platform powered by AI to match client needs with applications.

- Expedia: Online travel platform leveraging data streaming for a conversational chatbot service incorporating complex technologies such as fulfillment, natural-language understanding, and real-time analytics.

- Alex Bank: A 100% digital and cloud-native bank using real-time data to enable a new digital banking experience.

- Grab: Asian mobility service that built a cybersecurity platform for monitoring 130M+ devices and generating 20M+ AI-driven risk verdicts daily.

Resources to learn more

This blog post is just the starting point. Learn more about data streaming and digital natives in the following on-demand webinar recording, the related slide deck, and further resources, including pretty cool lightboard videos about use cases.

On-demand video recording

The video recording explores trends and architectures for data streaming at digital natives. The primary focus is the data streaming case studies. Check out our on-demand recording:

Slides

If you prefer learning from slides, check out the deck used for the above recording:

Data streaming case studies and lightboard videos of digital natives

The state of data streaming for digital natives in 2023 is fascinating. New use cases and case studies come up every month. This includes better data governance across the entire organization, real-time data collection and processing data from network infrastructure and mobile apps, data sharing and B2B partnerships with new business models, and many more scenarios.

We recorded lightboard videos showing the value of data streaming simply and effectively. These five-minute videos explore the business value of data streaming, related architectures, and customer stories. Stay tuned; I will update the links in the next few weeks and publish a separate blog post for each story and lightboard video.

And this is just the beginning. Every month, we will talk about the status of data streaming in a different industry. Manufacturing was the first. Financial services second, then retail, telcos, digital natives, gaming, and so on…

Let’s connect on LinkedIn and discuss it! Join the data streaming community and stay informed about new blog posts by subscribing to my newsletter.