The Fourth Industrial Revolution (also known as Industry 4.0) is the ongoing automation of traditional manufacturing and industrial practices using modern smart technology. Event streaming with Apache Kafka plays a key role here: it processes massive volumes of data in real time in a reliable, scalable, and flexible way, and it integrates with various legacy and modern data sources and sinks. This blog post explores Apache Kafka’s relationship to modern telco infrastructures that leverage private 5G campus networks for Industrial IoT (IIoT) and edge computing.

Event Streaming with Kafka at the Disconnected Edge

Apache Kafka is the new black at the edge.

This is true not just for obvious verticals such as manufacturing, oil & gas, and the automotive industry. Other industries, including retail, healthcare, government, financial services, and energy, leverage Apache Kafka to take advantage of IoT devices, sensors, smart machines, robotics, and connected data.

This post focuses on the autonomous (and sometimes disconnected) edge. This means the edge sites require good, stable local network communication, but not necessarily stable, low-latency connectivity to the remote data center or cloud. The autonomous or disconnected edge needs to operate continuously even if the connection to the internet is broken. The example below uses smart factories, but the same use cases are deployed across many other scenarios, including restaurants, retail stores, and hospitals.

This post does NOT explore the connected edge with use cases such as V2X (vehicle-to-everything) and standards such as C-V2X (Cellular / 5G) by 5GAA. V2X and all the use cases around mobility services and smart cities will be explored in another post. This topic is very different, e.g., because there is no stable internet connection and you (have to) leverage standards such as MQTT in conjunction with Kafka. Obviously, plenty of very relevant use cases exist here, too. Subscribe to my newsletter to stay updated with new blog posts!

Why is 5G a Game Changer for Industrial IoT, Automotive, and Smart City?

5G is the fifth-generation technology standard for broadband cellular networks. Many people wonder why there is so much hype around 5G.

What actually is 5G?

I cannot tell you all the technical details. But on a high level from a use case perspective, it is important to understand that 5G is much more than just higher speed and lower latency:

- Public 5G telco infrastructure: That’s what Verizon, AT&T, T-Mobile, and all the other telco providers talk about in their TV spots. The end consumer gets higher download speeds and lower latency (at least in theory). This infrastructure integrates vehicles (e.g., cars) and devices (e.g., mobile phones) to the 5G network (V2N).

- Private 5G campus networks: That’s what many enterprises are most interested in. The enterprise can set up private 5G networks with guaranteed quality of service (QoS) using acquired 5G slices from the 5G spectrum. Enterprises work with telco providers, telco hardware vendors, and sometimes also with cloud providers (e.g., AWS Wavelength). This infrastructure is used similarly to the public 5G but deployed, e.g., in a factory or hospital. The trade-offs are guaranteed SLAs and increased security vs. higher cost.

- Direct connection between devices: That’s for interlinking the communication between two or more vehicles (V2V) or vehicles and infrastructure (V2I) via unicast or multicast. There is no need for a network hop to the cell tower thanks to a 5G technique called sidelink communication. This enables new use cases, especially in safety-critical environments (e.g., autonomous driving) where Bluetooth, Wi-Fi, and similar network communications do not work well for different reasons.

As I mentioned before, this post focuses on architectures for private 5G campus networks and their relation to the public 5G infrastructure. V2X, including all the connected mobility services, will be covered in other posts.

5G for Wide-Area, Local-Area, and Personal-Area Communication

To sum up the 5G hype: “Instead of providing a different radio interface for every use case, device vendors could rely solely on 5G as the link for wide-area, local-area, and personal-area communications”, as explained in a great 5G blog post from Benny Vejlgaard (Nokia).

Let’s now see how 5G infrastructures are related to event streaming with Apache Kafka.

Multi-Access Edge Computing (MEC)

Multi-access edge computing (MEC) is another important term in this context. MEC was formerly called mobile edge computing. It is an ETSI-defined network architecture concept that enables cloud computing capabilities and an IT service environment at the edge of the cellular network. Hence, data processing in general moves closer to the edge of the network.

The basic idea behind MEC is that by running applications and performing related processing tasks closer to the cellular customer, network congestion is reduced and applications perform better. MEC technology is designed to be implemented at the cellular base stations or other edge nodes. It enables flexible and rapid deployment of new applications and services for customers. Combining elements of information technology and telecommunications networking, MEC also allows cellular operators to open their radio access network (RAN) to authorized third parties, such as application developers and content providers.
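To make the congestion argument concrete, here is a minimal sketch of processing close to the data source. It is not an MEC API and not Kafka code; the function name `summarize_at_edge`, the window size, and the sample readings are assumptions for illustration only. The idea shown: an edge node collapses raw sensor readings into one summary record per window, so fewer records need to travel upstream.

```python
from statistics import mean


def summarize_at_edge(readings, window_size):
    """Collapse raw readings into one summary record per window (hypothetical helper)."""
    summaries = []
    for start in range(0, len(readings), window_size):
        window = readings[start:start + window_size]
        summaries.append({
            "count": len(window),
            "min": min(window),
            "max": max(window),
            "mean": mean(window),
        })
    return summaries


# Made-up temperature readings from a single sensor.
raw = [20.0, 20.5, 21.0, 35.0, 20.0, 19.5, 20.5, 20.0]
upstream = summarize_at_edge(raw, window_size=4)
print(len(raw), "raw readings ->", len(upstream), "records sent upstream")
```

The spike of 35.0 still shows up in the `max` field of the first summary, so the remote side keeps the signal while receiving a quarter of the records.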

The use cases overlap with what you can read about 5G. So I focus on the term 5G in this blog post. However, the concept of MEC is equally relevant.

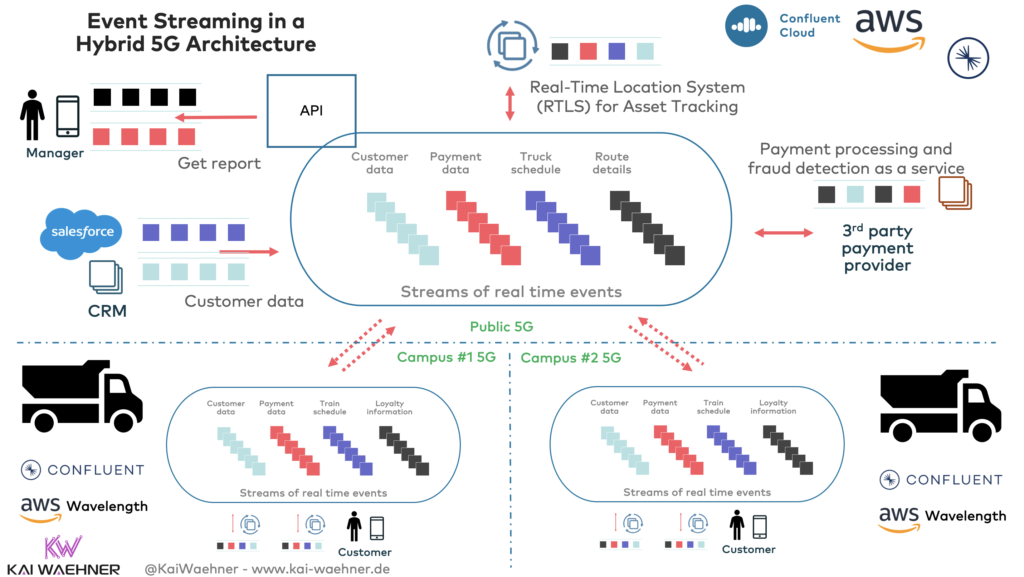

Event Streaming in a Hybrid 5G Architecture

Industry 4.0 is all about processing high volumes of data in real-time. That’s obviously a perfect fit for Apache Kafka. Please note that Apache Kafka is NOT used for “hard real-time” but only for soft real-time. If you need zero latency for embedded systems, PLCs, and robots, that’s a job for assembler or MISRA C, not Java and Kafka. Kafka is a perfect fit for any use case where an end-to-end latency of 10+ ms is good enough. That covers almost all IT use cases, but not OT use cases.

The following shows a high-level hybrid 5G architecture. It combines cloud computing with edge processing in 5G campus networks installed in smart factories:

Some notes on the picture:

- Most enterprise applications, such as the Kafka-based real-time location system (RTLS), run in a data center or public cloud. They use public 5G networks or any other stable internet connection.

- Each smart factory has a dedicated 5G campus network. These 5G slices provide guaranteed QoS. Various deployment options exist for 5G networks. All have their pros and cons regarding cost, bandwidth, latency, and SLAs. In this example, the combination of a telco provider and AWS Wavelength is used to enable an edge infrastructure with stable 5G processing and compute power to deploy Apache Kafka and other applications close to the production line in the plant on AWS EC2 instances.

- The integration between edge sites and the central data center or cloud is implemented with Kafka-native real-time technologies such as MirrorMaker 2 or Confluent’s Cluster Linking. This enables decoupled infrastructures and high throughput, guaranteed ordering, real-time replication, and out-of-the-box error handling. These characteristics are key because each smart factory runs mission-critical workloads disconnected from the cloud.
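The edge-to-cloud replication described in the notes above can be sketched with a toy model. This is plain Python, not MirrorMaker 2 or Cluster Linking; the classes `Cluster` and `Replicator`, the aliases `factory-1` and `factory-2`, and the topic `sensor-events` are all hypothetical. The sketch only borrows one real convention: MirrorMaker 2's default replication policy names a replicated topic after its source cluster alias (`<alias>.<topic>`), which keeps the data from each factory separate in the central cluster.

```python
from collections import defaultdict


class Cluster:
    """Toy stand-in for a Kafka cluster: topic name -> ordered list of events."""

    def __init__(self, alias):
        self.alias = alias
        self.topics = defaultdict(list)

    def produce(self, topic, event):
        self.topics[topic].append(event)


class Replicator:
    """Copies one source topic to a target cluster, resuming after the last copied offset."""

    def __init__(self, source, target, topic):
        self.source, self.target, self.topic = source, target, topic
        # Source-prefixed name, in the style of MirrorMaker 2's default policy.
        self.remote_topic = f"{source.alias}.{topic}"
        self.next_offset = 0  # first offset that has not been copied yet

    def sync(self):
        log = self.source.topics[self.topic]
        pending = log[self.next_offset:]
        for event in pending:  # copied in source order
            self.target.produce(self.remote_topic, event)
        self.next_offset = len(log)
        return len(pending)


cloud = Cluster("cloud")
factory_1 = Cluster("factory-1")
factory_2 = Cluster("factory-2")
links = [Replicator(f, cloud, "sensor-events") for f in (factory_1, factory_2)]

factory_1.produce("sensor-events", "f1-a")
factory_2.produce("sensor-events", "f2-a")
factory_1.produce("sensor-events", "f1-b")
for link in links:
    link.sync()

factory_2.produce("sensor-events", "f2-b")
copied = [link.sync() for link in links]  # only the new event is copied
print(dict(cloud.topics))
```

Each factory keeps its own cluster and its own log, and the replicator only remembers an offset. That is the decoupling the architecture relies on: a factory never waits for the cloud, and the order of events from one factory is preserved in its replicated topic.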

Let’s now dig a little bit deeper into a smart factory to understand how edge computing works in this example.

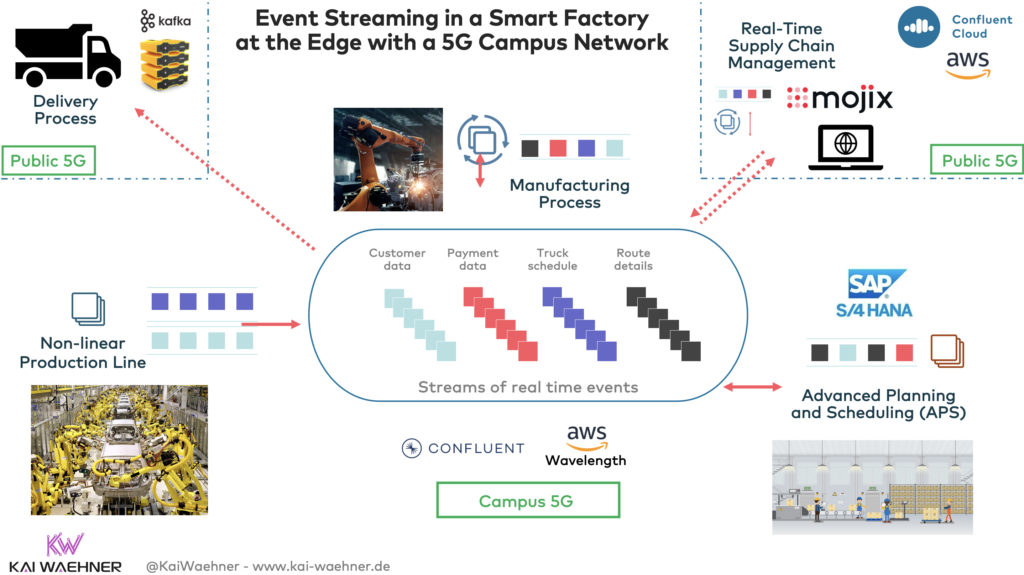

Apache Kafka in Smart Factory at the Edge with a 5G Campus Network

The following picture shows the event streaming infrastructure inside a smart factory:

Some notes on this architecture:

- All the mission-critical workloads on the production line at the edge can operate without a connection to the internet. This includes processing on the production lines and analytics such as predictive maintenance or real-time dashboards for the on-site plant manager. The infrastructure runs 24/7, even if the location is offline and not connected to the public internet. This is not just about the outage of a data center or cloud! Often, applications in Industrial IoT (IIoT) are disconnected intentionally to provide a more secure environment.

- Some applications run in the remote data center or cloud. They continuously consume relevant data from the smart factory in real-time. After a disconnection, they fall behind. As soon as they get connected again, they consume all missed data and go back to real-time updates.

- In this example, Mojix, a Kafka-native supply chain management service, is deployed in the cloud. Obviously, if these supply chain processes are critical for the production line, the architecture would either include a direct, stable connection to the cloud (e.g., AWS Direct Connect or Azure ExpressRoute) or also be deployed in the smart factory. Kafka allows a flexible, hybrid architecture where applications can live where it makes the most sense from a technical and business perspective.

- A supply chain is complex. It includes much more than just the production lines and MES/ERP/APS systems in the smart factory. Integration with enterprise IT systems in the data center AND integration with suppliers and partners is key to success. Event Streaming with Apache Kafka plays a huge role in many postmodern supply chain architectures.
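The "fall behind, then catch up" behavior of remote applications mentioned in the notes above comes down to offsets. The following is a minimal sketch in plain Python, not the Kafka consumer API; `EdgeLog`, `CloudConsumer`, the batch size, and the event names are assumptions for illustration. The edge log keeps every event, the remote consumer only remembers its read position, and the lag is the distance between the two.

```python
class EdgeLog:
    """Append-only log kept at the edge site (toy stand-in for a topic partition)."""

    def __init__(self):
        self.events = []

    def append(self, event):
        self.events.append(event)

    @property
    def end_offset(self):
        return len(self.events)


class CloudConsumer:
    """Remote application that reads the edge log whenever the link is up."""

    def __init__(self, log):
        self.log = log
        self.position = 0  # next offset to read
        self.connected = True
        self.seen = []

    def lag(self):
        return self.log.end_offset - self.position

    def poll(self, max_records=2):
        if not self.connected:
            return []  # the edge keeps running; nothing is lost
        batch = self.log.events[self.position:self.position + max_records]
        self.position += len(batch)
        self.seen.extend(batch)
        return batch


log = EdgeLog()
consumer = CloudConsumer(log)

log.append("m1")
consumer.poll()  # real-time consumption, lag is back to 0

consumer.connected = False  # the link to the cloud goes down
for event in ("m2", "m3", "m4"):
    log.append(event)  # production continues at the edge
consumer.poll()
lag_offline = consumer.lag()

consumer.connected = True  # the link is back
while consumer.lag() > 0:
    consumer.poll()  # catch up in batches, in the original order
print(lag_offline, consumer.seen)
```

The consumer needs no special recovery logic in this model. It resumes from its last position, reads the missed events in order, and is back to real-time updates once the lag reaches zero.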

5G is the Future for many Edge and Hybrid Kafka Use Cases in Industry 4.0

Apache Kafka plays a key role in processing massive volumes of data in real-time in a reliable, scalable, and flexible way. This is relevant across industries for Industry 4.0 use cases. Public and private 5G networks enable the next generation of Industrial IoT, edge computing, and real-time use cases across verticals.

At the beginning of 2021, we are still in the early stages of 5G infrastructures. But the first enterprises are already working with telco providers to build great use cases with 5G and event streaming.

What are your experiences and plans with private and public 5G infrastructures? Do you plan to use Apache Kafka at the edge, too? Which approach works best for you? What is your strategy? Check out the “Infrastructure Checklist for Apache Kafka at the Edge” if you plan to go that direction!

Let’s connect on LinkedIn and discuss it! Also, stay informed about new blog posts by subscribing to my newsletter.