Shift Left Architecture for AI and Analytics with Confluent and Databricks

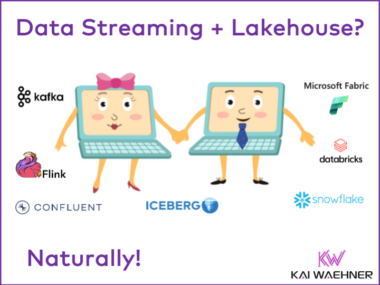

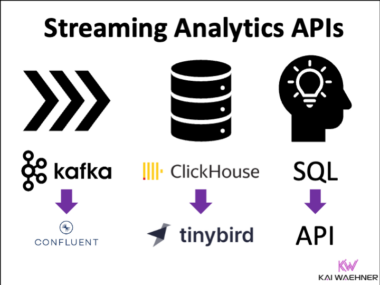

Confluent and Databricks together enable a modern data architecture that unifies real-time streaming with lakehouse analytics. By combining shift-left principles, which move data processing and quality checks closer to the source, with the structured bronze, silver, and gold layers of the Medallion Architecture, teams can improve data quality, reduce pipeline complexity, and accelerate insights for both operational and analytical workloads. Technologies such as Apache Kafka, Apache Flink, and Delta Lake form the backbone of scalable, AI-ready pipelines across cloud and hybrid environments.